diff --git a/.gitattributes b/.gitattributes

index a6344aac8c09253b3b630fb776ae94478aa0275b..4e86a1d05e648d0adf1095c7ec0a53273aa4ff2f 100644

--- a/.gitattributes

+++ b/.gitattributes

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

*.zip filter=lfs diff=lfs merge=lfs -text

*.zst filter=lfs diff=lfs merge=lfs -text

*tfevents* filter=lfs diff=lfs merge=lfs -text

+model/sentence-transformer/unigram.json filter=lfs diff=lfs merge=lfs -text

diff --git a/README.md b/README.md

index e875eb33f11c20bb976082ca1649d55ba96bc33f..1a3a7f560989931b7971cc5b76658ac01d969702 100644

--- a/README.md

+++ b/README.md

@@ -1,8 +1,8 @@

---

title: LlamaIndexRAG

-emoji: 🚀

-colorFrom: blue

-colorTo: blue

+emoji: 🔥

+colorFrom: red

+colorTo: green

sdk: streamlit

sdk_version: 1.41.1

app_file: app.py

diff --git a/app.py b/app.py

new file mode 100644

index 0000000000000000000000000000000000000000..83190403ae03119d8943063979c66a45f07bfcaa

--- /dev/null

+++ b/app.py

@@ -0,0 +1,83 @@

+import streamlit as st

+from llama_index.core import VectorStoreIndex, SimpleDirectoryReader, Settings

+from llama_index.embeddings.huggingface import HuggingFaceEmbedding

+from llama_index.legacy.callbacks import CallbackManager

+from llama_index.llms.openai_like import OpenAILike

+

+# Create an instance of CallbackManager

+callback_manager = CallbackManager()

+

+api_base_url = "https://internlm-chat.intern-ai.org.cn/puyu/api/v1/"

+model = "internlm2.5-latest"

+api_key = "eyJ0eXBlIjoiSldUIiwiYWxnIjoiSFM1MTIifQ.eyJqdGkiOiIxNzAwMzA3OCIsInJvbCI6IlJPTEVfUkVHSVNURVIiLCJpc3MiOiJPcGVuWExhYiIsImlhdCI6MTczMzc1OTE4OCwiY2xpZW50SWQiOiJlYm1ydm9kNnlvMG5semFlazF5cCIsInBob25lIjoiMTg0MDY1MDk1NTgiLCJ1dWlkIjoiNzJhNWJhZmEtYjI3MC00NTVmLWJlYTgtYzViYmNiNDM3YWYxIiwiZW1haWwiOiIiLCJleHAiOjE3NDkzMTExODh9.D1Z-XG0-ZY7ZsSRAGq6V6hrzV8Pk9EgDHthSZZYCcK30-yiHPdRuB4kR4_96azWKbEO_G_nvhrNmKlHHCVAV1w"

+

+# api_base_url = "https://api.siliconflow.cn/v1"

+# model = "internlm/internlm2_5-7b-chat"

+# api_key = "请填写 API Key"

+

+llm =OpenAILike(model=model, api_base=api_base_url, api_key=api_key, is_chat_model=True,callback_manager=callback_manager)

+

+

+

+st.set_page_config(page_title="llama_index_demo", page_icon="🦜🔗")

+st.title("llama_index_demo")

+

+# 初始化模型

+@st.cache_resource

+def init_models():

+ embed_model = HuggingFaceEmbedding(

+ model_name="model/sentence-transformer"

+ )

+ Settings.embed_model = embed_model

+

+ #用初始化llm

+ Settings.llm = llm

+

+ documents = SimpleDirectoryReader("data").load_data()

+ index = VectorStoreIndex.from_documents(documents)

+ query_engine = index.as_query_engine()

+

+ return query_engine

+

+# 检查是否需要初始化模型

+if 'query_engine' not in st.session_state:

+ st.session_state['query_engine'] = init_models()

+

+def greet2(question):

+ response = st.session_state['query_engine'].query(question)

+ return response

+

+

+# Store LLM generated responses

+if "messages" not in st.session_state.keys():

+ st.session_state.messages = [{"role": "assistant", "content": "你好,我是你的助手,有什么我可以帮助你的吗?"}]

+

+ # Display or clear chat messages

+for message in st.session_state.messages:

+ with st.chat_message(message["role"]):

+ st.write(message["content"])

+

+def clear_chat_history():

+ st.session_state.messages = [{"role": "assistant", "content": "你好,我是你的助手,有什么我可以帮助你的吗?"}]

+

+st.sidebar.button('Clear Chat History', on_click=clear_chat_history)

+

+# Function for generating LLaMA2 response

+def generate_llama_index_response(prompt_input):

+ return greet2(prompt_input)

+

+# User-provided prompt

+if prompt := st.chat_input():

+ st.session_state.messages.append({"role": "user", "content": prompt})

+ with st.chat_message("user"):

+ st.write(prompt)

+

+# Gegenerate_llama_index_response last message is not from assistant

+if st.session_state.messages[-1]["role"] != "assistant":

+ with st.chat_message("assistant"):

+ with st.spinner("Thinking..."):

+ response = generate_llama_index_response(prompt)

+ placeholder = st.empty()

+ placeholder.markdown(response)

+ message = {"role": "assistant", "content": response}

+ st.session_state.messages.append(message)

diff --git a/data/README_zh-CN.md b/data/README_zh-CN.md

new file mode 100644

index 0000000000000000000000000000000000000000..f4f0b4b48d20bde7d916f534194671be09ca7d30

--- /dev/null

+++ b/data/README_zh-CN.md

@@ -0,0 +1,304 @@

+

+

+

+

+[](https://github.com/InternLM/xtuner/stargazers)

+[](https://github.com/InternLM/xtuner/blob/main/LICENSE)

+[](https://pypi.org/project/xtuner/)

+[](https://pypi.org/project/xtuner/)

+[](https://github.com/InternLM/xtuner/issues)

+[](https://github.com/InternLM/xtuner/issues)

+

+👋 加入我们:[](https://cdn.vansin.top/internlm/xtuner.jpg)

+[](https://twitter.com/intern_lm)

+[](https://discord.gg/xa29JuW87d)

+

+🔍 探索我们的模型:

+[](https://huggingface.co/xtuner)

+[](https://www.modelscope.cn/organization/xtuner)

+[](https://openxlab.org.cn/usercenter/xtuner)

+[](https://www.wisemodel.cn/organization/xtuner)

+

+[English](README.md) | 简体中文

+

+

+

+

+

+

+

+

+|

+ 模型

+ |

+

+ 数据集

+ |

+

+ 数据格式

+ |

+

+ 微调算法

+ |

+

+

+|

+

+ |

+

+

+ |

+

+

+ |

+

+

+ |

+

+

+

+

+## 🛠️ 快速上手

+

+### 安装

+

+- 推荐使用 conda 先构建一个 Python-3.10 的虚拟环境

+

+ ```bash

+ conda create --name xtuner-env python=3.10 -y

+ conda activate xtuner-env

+ ```

+

+- 通过 pip 安装 XTuner:

+

+ ```shell

+ pip install -U xtuner

+ ```

+

+ 亦可集成 DeepSpeed 安装:

+

+ ```shell

+ pip install -U 'xtuner[deepspeed]'

+ ```

+

+- 从源码安装 XTuner:

+

+ ```shell

+ git clone https://github.com/InternLM/xtuner.git

+ cd xtuner

+ pip install -e '.[all]'

+ ```

+

+### 微调

+

+XTuner 支持微调大语言模型。数据集预处理指南请查阅[文档](./docs/zh_cn/user_guides/dataset_prepare.md)。

+

+- **步骤 0**,准备配置文件。XTuner 提供多个开箱即用的配置文件,用户可以通过下列命令查看:

+

+ ```shell

+ xtuner list-cfg

+ ```

+

+ 或者,如果所提供的配置文件不能满足使用需求,请导出所提供的配置文件并进行相应更改:

+

+ ```shell

+ xtuner copy-cfg ${CONFIG_NAME} ${SAVE_PATH}

+ vi ${SAVE_PATH}/${CONFIG_NAME}_copy.py

+ ```

+

+- **步骤 1**,开始微调。

+

+ ```shell

+ xtuner train ${CONFIG_NAME_OR_PATH}

+ ```

+

+ 例如,我们可以利用 QLoRA 算法在 oasst1 数据集上微调 InternLM2.5-Chat-7B:

+

+ ```shell

+ # 单卡

+ xtuner train internlm2_5_chat_7b_qlora_oasst1_e3 --deepspeed deepspeed_zero2

+ # 多卡

+ (DIST) NPROC_PER_NODE=${GPU_NUM} xtuner train internlm2_5_chat_7b_qlora_oasst1_e3 --deepspeed deepspeed_zero2

+ (SLURM) srun ${SRUN_ARGS} xtuner train internlm2_5_chat_7b_qlora_oasst1_e3 --launcher slurm --deepspeed deepspeed_zero2

+ ```

+

+ - `--deepspeed` 表示使用 [DeepSpeed](https://github.com/microsoft/DeepSpeed) 🚀 来优化训练过程。XTuner 内置了多种策略,包括 ZeRO-1、ZeRO-2、ZeRO-3 等。如果用户期望关闭此功能,请直接移除此参数。

+

+ - 更多示例,请查阅[文档](./docs/zh_cn/user_guides/finetune.md)。

+

+- **步骤 2**,将保存的 PTH 模型(如果使用的DeepSpeed,则将会是一个文件夹)转换为 HuggingFace 模型:

+

+ ```shell

+ xtuner convert pth_to_hf ${CONFIG_NAME_OR_PATH} ${PTH} ${SAVE_PATH}

+ ```

+

+### 对话

+

+XTuner 提供与大语言模型对话的工具。

+

+```shell

+xtuner chat ${NAME_OR_PATH_TO_LLM} --adapter {NAME_OR_PATH_TO_ADAPTER} [optional arguments]

+```

+

+例如:

+

+与 InternLM2.5-Chat-7B 对话:

+

+```shell

+xtuner chat internlm/internlm2-chat-7b --prompt-template internlm2_chat

+```

+

+更多示例,请查阅[文档](./docs/zh_cn/user_guides/chat.md)。

+

+### 部署

+

+- **步骤 0**,将 HuggingFace adapter 合并到大语言模型:

+

+ ```shell

+ xtuner convert merge \

+ ${NAME_OR_PATH_TO_LLM} \

+ ${NAME_OR_PATH_TO_ADAPTER} \

+ ${SAVE_PATH} \

+ --max-shard-size 2GB

+ ```

+

+- **步骤 1**,使用任意推理框架部署微调后的大语言模型,例如 [LMDeploy](https://github.com/InternLM/lmdeploy) 🚀:

+

+ ```shell

+ pip install lmdeploy

+ python -m lmdeploy.pytorch.chat ${NAME_OR_PATH_TO_LLM} \

+ --max_new_tokens 256 \

+ --temperture 0.8 \

+ --top_p 0.95 \

+ --seed 0

+ ```

+

+ 🔥 追求速度更快、显存占用更低的推理?欢迎体验 [LMDeploy](https://github.com/InternLM/lmdeploy) 提供的 4-bit 量化!使用指南请见[文档](https://github.com/InternLM/lmdeploy/tree/main#quantization)。

+

+### 评测

+

+- 推荐使用一站式平台 [OpenCompass](https://github.com/InternLM/opencompass) 来评测大语言模型,其目前已涵盖 50+ 数据集的约 30 万条题目。

+

+## 🤝 贡献指南

+

+我们感谢所有的贡献者为改进和提升 XTuner 所作出的努力。请参考[贡献指南](.github/CONTRIBUTING.md)来了解参与项目贡献的相关指引。

+

+## 🎖️ 致谢

+

+- [Llama 2](https://github.com/facebookresearch/llama)

+- [DeepSpeed](https://github.com/microsoft/DeepSpeed)

+- [QLoRA](https://github.com/artidoro/qlora)

+- [LMDeploy](https://github.com/InternLM/lmdeploy)

+- [LLaVA](https://github.com/haotian-liu/LLaVA)

+

+## 🖊️ 引用

+

+```bibtex

+@misc{2023xtuner,

+ title={XTuner: A Toolkit for Efficiently Fine-tuning LLM},

+ author={XTuner Contributors},

+ howpublished = {\url{https://github.com/InternLM/xtuner}},

+ year={2023}

+}

+```

+

+## 开源许可证

+

+该项目采用 [Apache License 2.0 开源许可证](LICENSE)。同时,请遵守所使用的模型与数据集的许可证。

diff --git a/data/xtuner/.github/CONTRIBUTING.md b/data/xtuner/.github/CONTRIBUTING.md

new file mode 100644

index 0000000000000000000000000000000000000000..09eab9a11f2729b5bdebf211cc77fa44c62c104f

--- /dev/null

+++ b/data/xtuner/.github/CONTRIBUTING.md

@@ -0,0 +1,258 @@

+## Contributing to InternLM

+

+Welcome to the XTuner community! All kinds of contributions are welcomed, including but not limited to

+

+**Fix bug**

+

+You can directly post a Pull Request to fix typo in code or documents

+

+The steps to fix the bug of code implementation are as follows.

+

+1. If the modification involve significant changes, you should create an issue first and describe the error information and how to trigger the bug. Other developers will discuss with you and propose an proper solution.

+

+2. Posting a pull request after fixing the bug and adding corresponding unit test.

+

+**New Feature or Enhancement**

+

+1. If the modification involve significant changes, you should create an issue to discuss with our developers to propose an proper design.

+2. Post a Pull Request after implementing the new feature or enhancement and add corresponding unit test.

+

+**Document**

+

+You can directly post a pull request to fix documents. If you want to add a document, you should first create an issue to check if it is reasonable.

+

+### Pull Request Workflow

+

+If you're not familiar with Pull Request, don't worry! The following guidance will tell you how to create a Pull Request step by step. If you want to dive into the develop mode of Pull Request, you can refer to the [official documents](https://docs.github.com/en/github/collaborating-with-issues-and-pull-requests/about-pull-requests)

+

+#### 1. Fork and clone

+

+If you are posting a pull request for the first time, you should fork the XTuner repository by clicking the **Fork** button in the top right corner of the GitHub page, and the forked repository will appear under your GitHub profile.

+

+ +

+Then, you can clone the repositories to local:

+

+```shell

+git clone git@github.com:{username}/xtuner.git

+```

+

+After that, you should add official repository as the upstream repository

+

+```bash

+git remote add upstream git@github.com:InternLM/xtuner.git

+```

+

+Check whether remote repository has been added successfully by `git remote -v`

+

+```bash

+origin git@github.com:{username}/xtuner.git (fetch)

+origin git@github.com:{username}/xtuner.git (push)

+upstream git@github.com:InternLM/xtuner.git (fetch)

+upstream git@github.com:InternLM/xtuner.git (push)

+```

+

+> Here's a brief introduction to origin and upstream. When we use "git clone", we create an "origin" remote by default, which points to the repository cloned from. As for "upstream", we add it ourselves to point to the target repository. Of course, if you don't like the name "upstream", you could name it as you wish. Usually, we'll push the code to "origin". If the pushed code conflicts with the latest code in official("upstream"), we should pull the latest code from upstream to resolve the conflicts, and then push to "origin" again. The posted Pull Request will be updated automatically.

+

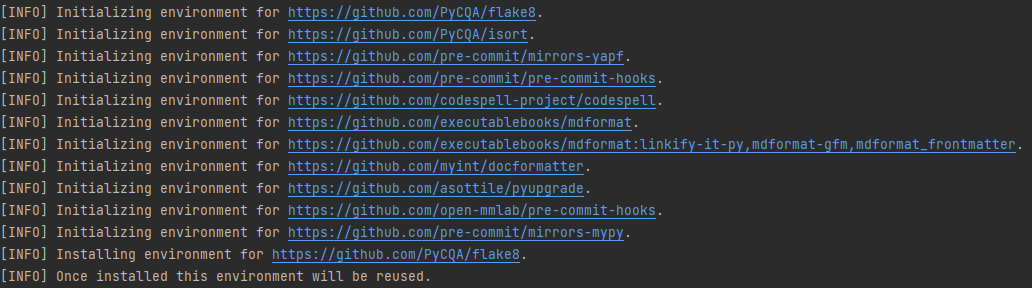

+#### 2. Configure pre-commit

+

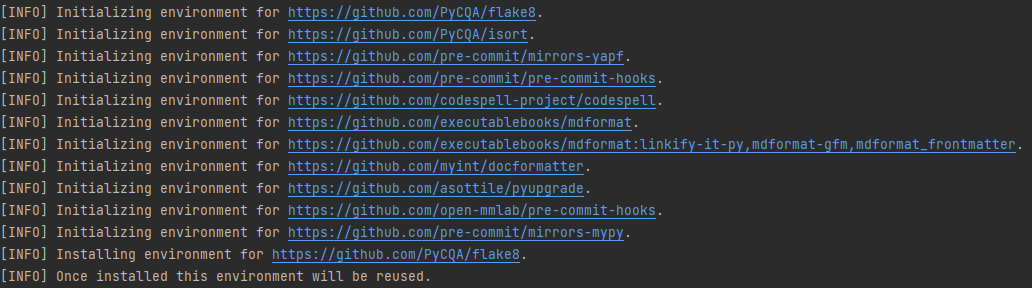

+You should configure [pre-commit](https://pre-commit.com/#intro) in the local development environment to make sure the code style matches that of InternLM. **Note**: The following code should be executed under the XTuner directory.

+

+```shell

+pip install -U pre-commit

+pre-commit install

+```

+

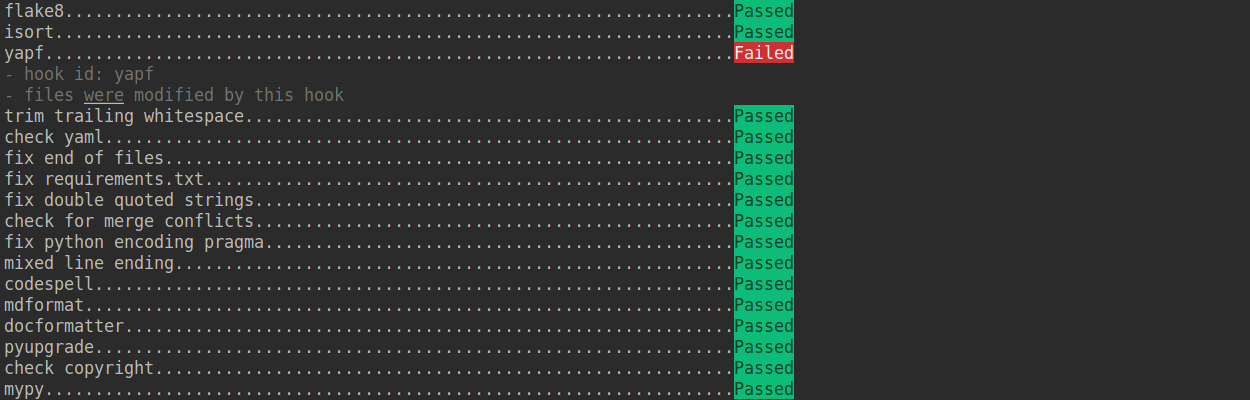

+Check that pre-commit is configured successfully, and install the hooks defined in `.pre-commit-config.yaml`.

+

+```shell

+pre-commit run --all-files

+```

+

+

+

+Then, you can clone the repositories to local:

+

+```shell

+git clone git@github.com:{username}/xtuner.git

+```

+

+After that, you should add official repository as the upstream repository

+

+```bash

+git remote add upstream git@github.com:InternLM/xtuner.git

+```

+

+Check whether remote repository has been added successfully by `git remote -v`

+

+```bash

+origin git@github.com:{username}/xtuner.git (fetch)

+origin git@github.com:{username}/xtuner.git (push)

+upstream git@github.com:InternLM/xtuner.git (fetch)

+upstream git@github.com:InternLM/xtuner.git (push)

+```

+

+> Here's a brief introduction to origin and upstream. When we use "git clone", we create an "origin" remote by default, which points to the repository cloned from. As for "upstream", we add it ourselves to point to the target repository. Of course, if you don't like the name "upstream", you could name it as you wish. Usually, we'll push the code to "origin". If the pushed code conflicts with the latest code in official("upstream"), we should pull the latest code from upstream to resolve the conflicts, and then push to "origin" again. The posted Pull Request will be updated automatically.

+

+#### 2. Configure pre-commit

+

+You should configure [pre-commit](https://pre-commit.com/#intro) in the local development environment to make sure the code style matches that of InternLM. **Note**: The following code should be executed under the XTuner directory.

+

+```shell

+pip install -U pre-commit

+pre-commit install

+```

+

+Check that pre-commit is configured successfully, and install the hooks defined in `.pre-commit-config.yaml`.

+

+```shell

+pre-commit run --all-files

+```

+

+ +

+

+

+ +

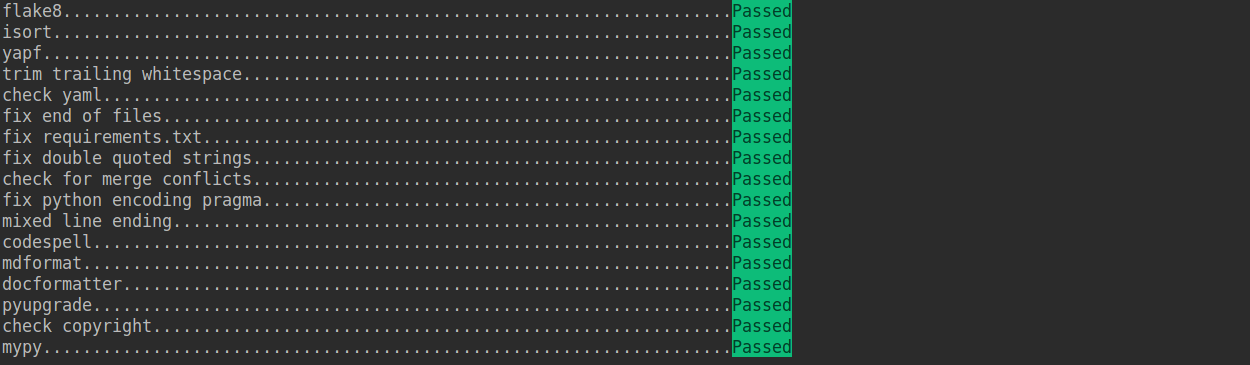

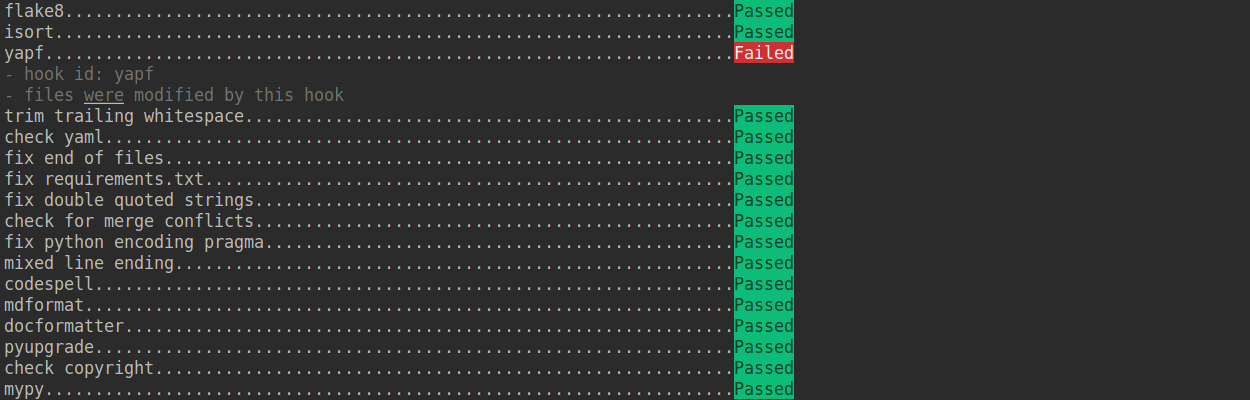

+If the installation process is interrupted, you can repeatedly run `pre-commit run ... ` to continue the installation.

+

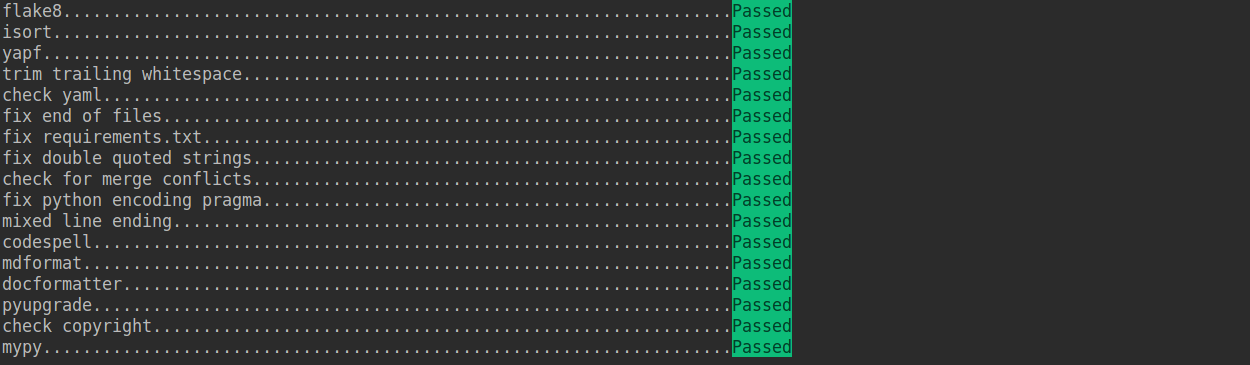

+If the code does not conform to the code style specification, pre-commit will raise a warning and fixes some of the errors automatically.

+

+

+

+If the installation process is interrupted, you can repeatedly run `pre-commit run ... ` to continue the installation.

+

+If the code does not conform to the code style specification, pre-commit will raise a warning and fixes some of the errors automatically.

+

+ +

+If we want to commit our code bypassing the pre-commit hook, we can use the `--no-verify` option(**only for temporarily commit**).

+

+```shell

+git commit -m "xxx" --no-verify

+```

+

+#### 3. Create a development branch

+

+After configuring the pre-commit, we should create a branch based on the master branch to develop the new feature or fix the bug. The proposed branch name is `username/pr_name`

+

+```shell

+git checkout -b yhc/refactor_contributing_doc

+```

+

+In subsequent development, if the master branch of the local repository is behind the master branch of "upstream", we need to pull the upstream for synchronization, and then execute the above command:

+

+```shell

+git pull upstream master

+```

+

+#### 4. Commit the code and pass the unit test

+

+- XTuner introduces mypy to do static type checking to increase the robustness of the code. Therefore, we need to add Type Hints to our code and pass the mypy check. If you are not familiar with Type Hints, you can refer to [this tutorial](https://docs.python.org/3/library/typing.html).

+

+- The committed code should pass through the unit test

+

+ ```shell

+ # Pass all unit tests

+ pytest tests

+

+ # Pass the unit test of runner

+ pytest tests/test_runner/test_runner.py

+ ```

+

+ If the unit test fails for lack of dependencies, you can install the dependencies referring to the [guidance](#unit-test)

+

+- If the documents are modified/added, we should check the rendering result referring to [guidance](#document-rendering)

+

+#### 5. Push the code to remote

+

+We could push the local commits to remote after passing through the check of unit test and pre-commit. You can associate the local branch with remote branch by adding `-u` option.

+

+```shell

+git push -u origin {branch_name}

+```

+

+This will allow you to use the `git push` command to push code directly next time, without having to specify a branch or the remote repository.

+

+#### 6. Create a Pull Request

+

+(1) Create a pull request in GitHub's Pull request interface

+

+

+

+If we want to commit our code bypassing the pre-commit hook, we can use the `--no-verify` option(**only for temporarily commit**).

+

+```shell

+git commit -m "xxx" --no-verify

+```

+

+#### 3. Create a development branch

+

+After configuring the pre-commit, we should create a branch based on the master branch to develop the new feature or fix the bug. The proposed branch name is `username/pr_name`

+

+```shell

+git checkout -b yhc/refactor_contributing_doc

+```

+

+In subsequent development, if the master branch of the local repository is behind the master branch of "upstream", we need to pull the upstream for synchronization, and then execute the above command:

+

+```shell

+git pull upstream master

+```

+

+#### 4. Commit the code and pass the unit test

+

+- XTuner introduces mypy to do static type checking to increase the robustness of the code. Therefore, we need to add Type Hints to our code and pass the mypy check. If you are not familiar with Type Hints, you can refer to [this tutorial](https://docs.python.org/3/library/typing.html).

+

+- The committed code should pass through the unit test

+

+ ```shell

+ # Pass all unit tests

+ pytest tests

+

+ # Pass the unit test of runner

+ pytest tests/test_runner/test_runner.py

+ ```

+

+ If the unit test fails for lack of dependencies, you can install the dependencies referring to the [guidance](#unit-test)

+

+- If the documents are modified/added, we should check the rendering result referring to [guidance](#document-rendering)

+

+#### 5. Push the code to remote

+

+We could push the local commits to remote after passing through the check of unit test and pre-commit. You can associate the local branch with remote branch by adding `-u` option.

+

+```shell

+git push -u origin {branch_name}

+```

+

+This will allow you to use the `git push` command to push code directly next time, without having to specify a branch or the remote repository.

+

+#### 6. Create a Pull Request

+

+(1) Create a pull request in GitHub's Pull request interface

+

+ +

+(2) Modify the PR description according to the guidelines so that other developers can better understand your changes

+

+

+

+(2) Modify the PR description according to the guidelines so that other developers can better understand your changes

+

+ +

+Find more details about Pull Request description in [pull request guidelines](#pr-specs).

+

+**note**

+

+(a) The Pull Request description should contain the reason for the change, the content of the change, and the impact of the change, and be associated with the relevant Issue (see [documentation](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue))

+

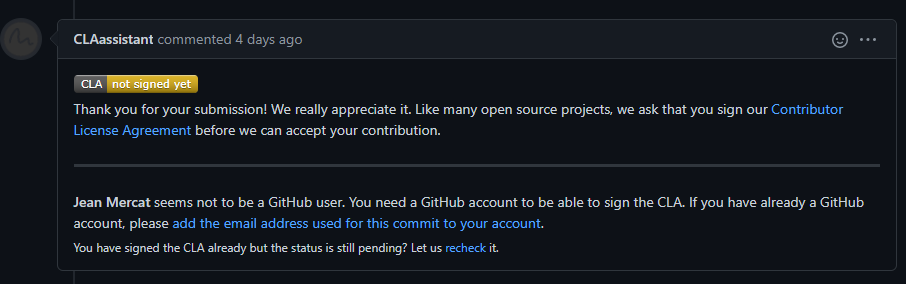

+(b) If it is your first contribution, please sign the CLA

+

+

+

+Find more details about Pull Request description in [pull request guidelines](#pr-specs).

+

+**note**

+

+(a) The Pull Request description should contain the reason for the change, the content of the change, and the impact of the change, and be associated with the relevant Issue (see [documentation](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue))

+

+(b) If it is your first contribution, please sign the CLA

+

+ +

+(c) Check whether the Pull Request pass through the CI

+

+

+

+(c) Check whether the Pull Request pass through the CI

+

+ +

+XTuner will run unit test for the posted Pull Request on different platforms (Linux, Window, Mac), based on different versions of Python, PyTorch, CUDA to make sure the code is correct. We can see the specific test information by clicking `Details` in the above image so that we can modify the code.

+

+(3) If the Pull Request passes the CI, then you can wait for the review from other developers. You'll modify the code based on the reviewer's comments, and repeat the steps [4](#4-commit-the-code-and-pass-the-unit-test)-[5](#5-push-the-code-to-remote) until all reviewers approve it. Then, we will merge it ASAP.

+

+

+

+XTuner will run unit test for the posted Pull Request on different platforms (Linux, Window, Mac), based on different versions of Python, PyTorch, CUDA to make sure the code is correct. We can see the specific test information by clicking `Details` in the above image so that we can modify the code.

+

+(3) If the Pull Request passes the CI, then you can wait for the review from other developers. You'll modify the code based on the reviewer's comments, and repeat the steps [4](#4-commit-the-code-and-pass-the-unit-test)-[5](#5-push-the-code-to-remote) until all reviewers approve it. Then, we will merge it ASAP.

+

+ +

+#### 7. Resolve conflicts

+

+If your local branch conflicts with the latest master branch of "upstream", you'll need to resolove them. There are two ways to do this:

+

+```shell

+git fetch --all --prune

+git rebase upstream/master

+```

+

+or

+

+```shell

+git fetch --all --prune

+git merge upstream/master

+```

+

+If you are very good at handling conflicts, then you can use rebase to resolve conflicts, as this will keep your commit logs tidy. If you are not familiar with `rebase`, then you can use `merge` to resolve conflicts.

+

+### Guidance

+

+#### Unit test

+

+If you cannot run the unit test of some modules for lacking of some dependencies, such as [video](https://github.com/open-mmlab/mmcv/tree/master/mmcv/video) module, you can try to install the following dependencies:

+

+```shell

+# Linux

+sudo apt-get update -y

+sudo apt-get install -y libturbojpeg

+sudo apt-get install -y ffmpeg

+

+# Windows

+conda install ffmpeg

+```

+

+We should also make sure the committed code will not decrease the coverage of unit test, we could run the following command to check the coverage of unit test:

+

+```shell

+python -m coverage run -m pytest /path/to/test_file

+python -m coverage html

+# check file in htmlcov/index.html

+```

+

+#### Document rendering

+

+If the documents are modified/added, we should check the rendering result. We could install the dependencies and run the following command to render the documents and check the results:

+

+```shell

+pip install -r requirements/docs.txt

+cd docs/zh_cn/

+# or docs/en

+make html

+# check file in ./docs/zh_cn/_build/html/index.html

+```

+

+### Code style

+

+#### Python

+

+We adopt [PEP8](https://www.python.org/dev/peps/pep-0008/) as the preferred code style.

+

+We use the following tools for linting and formatting:

+

+- [flake8](https://github.com/PyCQA/flake8): A wrapper around some linter tools.

+- [isort](https://github.com/timothycrosley/isort): A Python utility to sort imports.

+- [yapf](https://github.com/google/yapf): A formatter for Python files.

+- [codespell](https://github.com/codespell-project/codespell): A Python utility to fix common misspellings in text files.

+- [mdformat](https://github.com/executablebooks/mdformat): Mdformat is an opinionated Markdown formatter that can be used to enforce a consistent style in Markdown files.

+- [docformatter](https://github.com/myint/docformatter): A formatter to format docstring.

+

+Style configurations of yapf and isort can be found in [setup.cfg](../setup.cfg).

+

+We use [pre-commit hook](https://pre-commit.com/) that checks and formats for `flake8`, `yapf`, `isort`, `trailing whitespaces`, `markdown files`,

+fixes `end-of-files`, `double-quoted-strings`, `python-encoding-pragma`, `mixed-line-ending`, sorts `requirments.txt` automatically on every commit.

+The config for a pre-commit hook is stored in [.pre-commit-config](../.pre-commit-config.yaml).

+

+#### C++ and CUDA

+

+We follow the [Google C++ Style Guide](https://google.github.io/styleguide/cppguide.html).

+

+### PR Specs

+

+1. Use [pre-commit](https://pre-commit.com) hook to avoid issues of code style

+

+2. One short-time branch should be matched with only one PR

+

+3. Accomplish a detailed change in one PR. Avoid large PR

+

+ - Bad: Support Faster R-CNN

+ - Acceptable: Add a box head to Faster R-CNN

+ - Good: Add a parameter to box head to support custom conv-layer number

+

+4. Provide clear and significant commit message

+

+5. Provide clear and meaningful PR description

+

+ - Task name should be clarified in title. The general format is: \[Prefix\] Short description of the PR (Suffix)

+ - Prefix: add new feature \[Feature\], fix bug \[Fix\], related to documents \[Docs\], in developing \[WIP\] (which will not be reviewed temporarily)

+ - Introduce main changes, results and influences on other modules in short description

+ - Associate related issues and pull requests with a milestone

diff --git a/data/xtuner/.github/workflows/deploy.yml b/data/xtuner/.github/workflows/deploy.yml

new file mode 100644

index 0000000000000000000000000000000000000000..b2c6f0bc208ca0f3d2aba1d4dc04d97fb51cacbd

--- /dev/null

+++ b/data/xtuner/.github/workflows/deploy.yml

@@ -0,0 +1,26 @@

+name: deploy

+

+on: push

+

+concurrency:

+ group: ${{ github.workflow }}-${{ github.ref }}

+ cancel-in-progress: true

+

+jobs:

+ build-n-publish:

+ runs-on: ubuntu-latest

+ if: startsWith(github.event.ref, 'refs/tags')

+ steps:

+ - uses: actions/checkout@v2

+ - name: Set up Python 3.8

+ uses: actions/setup-python@v2

+ with:

+ python-version: 3.8

+ - name: Build XTuner

+ run: |

+ pip install wheel

+ python setup.py sdist bdist_wheel

+ - name: Publish distribution to PyPI

+ run: |

+ pip install twine

+ twine upload dist/* -u __token__ -p ${{ secrets.pypi_password }}

diff --git a/data/xtuner/.github/workflows/lint.yml b/data/xtuner/.github/workflows/lint.yml

new file mode 100644

index 0000000000000000000000000000000000000000..74a733eb81e8e3e3b7c6ca1c08de8856d6cfb81e

--- /dev/null

+++ b/data/xtuner/.github/workflows/lint.yml

@@ -0,0 +1,23 @@

+name: lint

+

+on: [push, pull_request]

+

+concurrency:

+ group: ${{ github.workflow }}-${{ github.ref }}

+ cancel-in-progress: true

+

+jobs:

+ lint:

+ runs-on: ubuntu-latest

+ steps:

+ - uses: actions/checkout@v2

+ - name: Set up Python 3.8

+ uses: actions/setup-python@v2

+ with:

+ python-version: 3.8

+ - name: Install pre-commit hook

+ run: |

+ pip install pre-commit

+ pre-commit install

+ - name: Linting

+ run: pre-commit run --all-files

diff --git a/data/xtuner/.gitignore b/data/xtuner/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..ffe3444b8cdb2ec3e6791d047d0593fcf9d20d41

--- /dev/null

+++ b/data/xtuner/.gitignore

@@ -0,0 +1,124 @@

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/*/_build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# pyenv

+.python-version

+

+# celery beat schedule file

+celerybeat-schedule

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+

+# custom

+data/

+data

+.vscode

+.idea

+.DS_Store

+*.pkl

+*.pkl.json

+*.log.json

+work_dirs/

+

+# Pytorch

+*.pth

+*.py~

+*.sh~

+

+# srun

+*.out

+batchscript-*

diff --git a/data/xtuner/.owners.yml b/data/xtuner/.owners.yml

new file mode 100644

index 0000000000000000000000000000000000000000..996ae4c69c03821b2b79a1b7a4233988cf0623ee

--- /dev/null

+++ b/data/xtuner/.owners.yml

@@ -0,0 +1,8 @@

+assign:

+ issues: disabled

+ pull_requests: disabled

+ strategy:

+ random

+ # daily-shift-based

+ schedule:

+ '*/1 * * * *'

diff --git a/data/xtuner/.pre-commit-config-zh-cn.yaml b/data/xtuner/.pre-commit-config-zh-cn.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..4b9f51976e4b46db4db69952f437e43d72581070

--- /dev/null

+++ b/data/xtuner/.pre-commit-config-zh-cn.yaml

@@ -0,0 +1,51 @@

+exclude: ^tests/data/|^xtuner/tools/model_converters/modeling_internlm2_reward/

+repos:

+ - repo: https://gitee.com/openmmlab/mirrors-flake8

+ rev: 5.0.4

+ hooks:

+ - id: flake8

+ args: ["--exclude=xtuner/model/transformers_models/*"]

+ - repo: https://gitee.com/openmmlab/mirrors-isort

+ rev: 5.11.5

+ hooks:

+ - id: isort

+ - repo: https://gitee.com/openmmlab/mirrors-yapf

+ rev: v0.32.0

+ hooks:

+ - id: yapf

+ - repo: https://gitee.com/openmmlab/mirrors-pre-commit-hooks

+ rev: v4.3.0

+ hooks:

+ - id: trailing-whitespace

+ - id: check-yaml

+ - id: end-of-file-fixer

+ - id: requirements-txt-fixer

+ - id: double-quote-string-fixer

+ - id: check-merge-conflict

+ - id: fix-encoding-pragma

+ args: ["--remove"]

+ - id: mixed-line-ending

+ args: ["--fix=lf"]

+ - repo: https://gitee.com/openmmlab/mirrors-codespell

+ rev: v2.2.1

+ hooks:

+ - id: codespell

+ - repo: https://gitee.com/openmmlab/mirrors-mdformat

+ rev: 0.7.9

+ hooks:

+ - id: mdformat

+ args: ["--number"]

+ additional_dependencies:

+ - mdformat-openmmlab

+ - mdformat_frontmatter

+ - linkify-it-py

+ - repo: https://gitee.com/openmmlab/mirrors-docformatter

+ rev: v1.3.1

+ hooks:

+ - id: docformatter

+ args: ["--in-place", "--wrap-descriptions", "79"]

+ - repo: https://github.com/asottile/pyupgrade

+ rev: v3.0.0

+ hooks:

+ - id: pyupgrade

+ args: ["--py36-plus"]

diff --git a/data/xtuner/.pre-commit-config.yaml b/data/xtuner/.pre-commit-config.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..f6bbfd6339aeba49dbae8a0edc425a6e3f0c8eb2

--- /dev/null

+++ b/data/xtuner/.pre-commit-config.yaml

@@ -0,0 +1,53 @@

+exclude: ^tests/data/|^xtuner/tools/model_converters/modeling_internlm2_reward/

+repos:

+ - repo: https://github.com/PyCQA/flake8

+ rev: 5.0.4

+ hooks:

+ - id: flake8

+ args: ["--exclude=xtuner/model/transformers_models/*"]

+ - repo: https://github.com/PyCQA/isort

+ rev: 5.11.5

+ hooks:

+ - id: isort

+ - repo: https://github.com/pre-commit/mirrors-yapf

+ rev: v0.32.0

+ hooks:

+ - id: yapf

+ exclude: 'xtuner/parallel/sequence/__init__.py'

+ - repo: https://github.com/pre-commit/pre-commit-hooks

+ rev: v4.3.0

+ hooks:

+ - id: trailing-whitespace

+ - id: check-yaml

+ - id: end-of-file-fixer

+ - id: requirements-txt-fixer

+ - id: double-quote-string-fixer

+ - id: check-merge-conflict

+ - id: fix-encoding-pragma

+ args: ["--remove"]

+ - id: mixed-line-ending

+ args: ["--fix=lf"]

+ - repo: https://github.com/codespell-project/codespell

+ rev: v2.2.1

+ hooks:

+ - id: codespell

+ - repo: https://github.com/executablebooks/mdformat

+ rev: 0.7.9

+ hooks:

+ - id: mdformat

+ args: ["--number"]

+ additional_dependencies:

+ - mdformat-openmmlab

+ - mdformat_frontmatter

+ - linkify-it-py

+ exclude: 'docs/zh_cn/user_guides/sequence_parallel.md'

+ - repo: https://github.com/myint/docformatter

+ rev: v1.3.1

+ hooks:

+ - id: docformatter

+ args: ["--in-place", "--wrap-descriptions", "79"]

+ - repo: https://github.com/asottile/pyupgrade

+ rev: v3.0.0

+ hooks:

+ - id: pyupgrade

+ args: ["--py36-plus"]

diff --git a/data/xtuner/LICENSE b/data/xtuner/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..261eeb9e9f8b2b4b0d119366dda99c6fd7d35c64

--- /dev/null

+++ b/data/xtuner/LICENSE

@@ -0,0 +1,201 @@

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright [yyyy] [name of copyright owner]

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/data/xtuner/MANIFEST.in b/data/xtuner/MANIFEST.in

new file mode 100644

index 0000000000000000000000000000000000000000..36e1610bf8093a8355a58d7d9779697a64931313

--- /dev/null

+++ b/data/xtuner/MANIFEST.in

@@ -0,0 +1,2 @@

+recursive-include xtuner/configs *.py *.yml *.json

+recursive-include xtuner/tools *.sh *.py

diff --git a/data/xtuner/README.md b/data/xtuner/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..263d300c7a17778e3be4ff6f64cd262995f98527

--- /dev/null

+++ b/data/xtuner/README.md

@@ -0,0 +1,302 @@

+

+

+#### 7. Resolve conflicts

+

+If your local branch conflicts with the latest master branch of "upstream", you'll need to resolove them. There are two ways to do this:

+

+```shell

+git fetch --all --prune

+git rebase upstream/master

+```

+

+or

+

+```shell

+git fetch --all --prune

+git merge upstream/master

+```

+

+If you are very good at handling conflicts, then you can use rebase to resolve conflicts, as this will keep your commit logs tidy. If you are not familiar with `rebase`, then you can use `merge` to resolve conflicts.

+

+### Guidance

+

+#### Unit test

+

+If you cannot run the unit test of some modules for lacking of some dependencies, such as [video](https://github.com/open-mmlab/mmcv/tree/master/mmcv/video) module, you can try to install the following dependencies:

+

+```shell

+# Linux

+sudo apt-get update -y

+sudo apt-get install -y libturbojpeg

+sudo apt-get install -y ffmpeg

+

+# Windows

+conda install ffmpeg

+```

+

+We should also make sure the committed code will not decrease the coverage of unit test, we could run the following command to check the coverage of unit test:

+

+```shell

+python -m coverage run -m pytest /path/to/test_file

+python -m coverage html

+# check file in htmlcov/index.html

+```

+

+#### Document rendering

+

+If the documents are modified/added, we should check the rendering result. We could install the dependencies and run the following command to render the documents and check the results:

+

+```shell

+pip install -r requirements/docs.txt

+cd docs/zh_cn/

+# or docs/en

+make html

+# check file in ./docs/zh_cn/_build/html/index.html

+```

+

+### Code style

+

+#### Python

+

+We adopt [PEP8](https://www.python.org/dev/peps/pep-0008/) as the preferred code style.

+

+We use the following tools for linting and formatting:

+

+- [flake8](https://github.com/PyCQA/flake8): A wrapper around some linter tools.

+- [isort](https://github.com/timothycrosley/isort): A Python utility to sort imports.

+- [yapf](https://github.com/google/yapf): A formatter for Python files.

+- [codespell](https://github.com/codespell-project/codespell): A Python utility to fix common misspellings in text files.

+- [mdformat](https://github.com/executablebooks/mdformat): Mdformat is an opinionated Markdown formatter that can be used to enforce a consistent style in Markdown files.

+- [docformatter](https://github.com/myint/docformatter): A formatter to format docstring.

+

+Style configurations of yapf and isort can be found in [setup.cfg](../setup.cfg).

+

+We use [pre-commit hook](https://pre-commit.com/) that checks and formats for `flake8`, `yapf`, `isort`, `trailing whitespaces`, `markdown files`,

+fixes `end-of-files`, `double-quoted-strings`, `python-encoding-pragma`, `mixed-line-ending`, sorts `requirments.txt` automatically on every commit.

+The config for a pre-commit hook is stored in [.pre-commit-config](../.pre-commit-config.yaml).

+

+#### C++ and CUDA

+

+We follow the [Google C++ Style Guide](https://google.github.io/styleguide/cppguide.html).

+

+### PR Specs

+

+1. Use [pre-commit](https://pre-commit.com) hook to avoid issues of code style

+

+2. One short-time branch should be matched with only one PR

+

+3. Accomplish a detailed change in one PR. Avoid large PR

+

+ - Bad: Support Faster R-CNN

+ - Acceptable: Add a box head to Faster R-CNN

+ - Good: Add a parameter to box head to support custom conv-layer number

+

+4. Provide clear and significant commit message

+

+5. Provide clear and meaningful PR description

+

+ - Task name should be clarified in title. The general format is: \[Prefix\] Short description of the PR (Suffix)

+ - Prefix: add new feature \[Feature\], fix bug \[Fix\], related to documents \[Docs\], in developing \[WIP\] (which will not be reviewed temporarily)

+ - Introduce main changes, results and influences on other modules in short description

+ - Associate related issues and pull requests with a milestone

diff --git a/data/xtuner/.github/workflows/deploy.yml b/data/xtuner/.github/workflows/deploy.yml

new file mode 100644

index 0000000000000000000000000000000000000000..b2c6f0bc208ca0f3d2aba1d4dc04d97fb51cacbd

--- /dev/null

+++ b/data/xtuner/.github/workflows/deploy.yml

@@ -0,0 +1,26 @@

+name: deploy

+

+on: push

+

+concurrency:

+ group: ${{ github.workflow }}-${{ github.ref }}

+ cancel-in-progress: true

+

+jobs:

+ build-n-publish:

+ runs-on: ubuntu-latest

+ if: startsWith(github.event.ref, 'refs/tags')

+ steps:

+ - uses: actions/checkout@v2

+ - name: Set up Python 3.8

+ uses: actions/setup-python@v2

+ with:

+ python-version: 3.8

+ - name: Build XTuner

+ run: |

+ pip install wheel

+ python setup.py sdist bdist_wheel

+ - name: Publish distribution to PyPI

+ run: |

+ pip install twine

+ twine upload dist/* -u __token__ -p ${{ secrets.pypi_password }}

diff --git a/data/xtuner/.github/workflows/lint.yml b/data/xtuner/.github/workflows/lint.yml

new file mode 100644

index 0000000000000000000000000000000000000000..74a733eb81e8e3e3b7c6ca1c08de8856d6cfb81e

--- /dev/null

+++ b/data/xtuner/.github/workflows/lint.yml

@@ -0,0 +1,23 @@

+name: lint

+

+on: [push, pull_request]

+

+concurrency:

+ group: ${{ github.workflow }}-${{ github.ref }}

+ cancel-in-progress: true

+

+jobs:

+ lint:

+ runs-on: ubuntu-latest

+ steps:

+ - uses: actions/checkout@v2

+ - name: Set up Python 3.8

+ uses: actions/setup-python@v2

+ with:

+ python-version: 3.8

+ - name: Install pre-commit hook

+ run: |

+ pip install pre-commit

+ pre-commit install

+ - name: Linting

+ run: pre-commit run --all-files

diff --git a/data/xtuner/.gitignore b/data/xtuner/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..ffe3444b8cdb2ec3e6791d047d0593fcf9d20d41

--- /dev/null

+++ b/data/xtuner/.gitignore

@@ -0,0 +1,124 @@

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/*/_build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# pyenv

+.python-version

+

+# celery beat schedule file

+celerybeat-schedule

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+

+# custom

+data/

+data

+.vscode

+.idea

+.DS_Store

+*.pkl

+*.pkl.json

+*.log.json

+work_dirs/

+

+# Pytorch

+*.pth

+*.py~

+*.sh~

+

+# srun

+*.out

+batchscript-*

diff --git a/data/xtuner/.owners.yml b/data/xtuner/.owners.yml

new file mode 100644

index 0000000000000000000000000000000000000000..996ae4c69c03821b2b79a1b7a4233988cf0623ee

--- /dev/null

+++ b/data/xtuner/.owners.yml

@@ -0,0 +1,8 @@

+assign:

+ issues: disabled

+ pull_requests: disabled

+ strategy:

+ random

+ # daily-shift-based

+ schedule:

+ '*/1 * * * *'

diff --git a/data/xtuner/.pre-commit-config-zh-cn.yaml b/data/xtuner/.pre-commit-config-zh-cn.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..4b9f51976e4b46db4db69952f437e43d72581070

--- /dev/null

+++ b/data/xtuner/.pre-commit-config-zh-cn.yaml

@@ -0,0 +1,51 @@

+exclude: ^tests/data/|^xtuner/tools/model_converters/modeling_internlm2_reward/

+repos:

+ - repo: https://gitee.com/openmmlab/mirrors-flake8

+ rev: 5.0.4

+ hooks:

+ - id: flake8

+ args: ["--exclude=xtuner/model/transformers_models/*"]

+ - repo: https://gitee.com/openmmlab/mirrors-isort

+ rev: 5.11.5

+ hooks:

+ - id: isort

+ - repo: https://gitee.com/openmmlab/mirrors-yapf

+ rev: v0.32.0

+ hooks:

+ - id: yapf

+ - repo: https://gitee.com/openmmlab/mirrors-pre-commit-hooks

+ rev: v4.3.0

+ hooks:

+ - id: trailing-whitespace

+ - id: check-yaml

+ - id: end-of-file-fixer

+ - id: requirements-txt-fixer

+ - id: double-quote-string-fixer

+ - id: check-merge-conflict

+ - id: fix-encoding-pragma

+ args: ["--remove"]

+ - id: mixed-line-ending

+ args: ["--fix=lf"]

+ - repo: https://gitee.com/openmmlab/mirrors-codespell

+ rev: v2.2.1

+ hooks:

+ - id: codespell

+ - repo: https://gitee.com/openmmlab/mirrors-mdformat

+ rev: 0.7.9

+ hooks:

+ - id: mdformat

+ args: ["--number"]

+ additional_dependencies:

+ - mdformat-openmmlab

+ - mdformat_frontmatter

+ - linkify-it-py

+ - repo: https://gitee.com/openmmlab/mirrors-docformatter

+ rev: v1.3.1

+ hooks:

+ - id: docformatter

+ args: ["--in-place", "--wrap-descriptions", "79"]

+ - repo: https://github.com/asottile/pyupgrade

+ rev: v3.0.0

+ hooks:

+ - id: pyupgrade

+ args: ["--py36-plus"]

diff --git a/data/xtuner/.pre-commit-config.yaml b/data/xtuner/.pre-commit-config.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..f6bbfd6339aeba49dbae8a0edc425a6e3f0c8eb2

--- /dev/null

+++ b/data/xtuner/.pre-commit-config.yaml

@@ -0,0 +1,53 @@

+exclude: ^tests/data/|^xtuner/tools/model_converters/modeling_internlm2_reward/

+repos:

+ - repo: https://github.com/PyCQA/flake8

+ rev: 5.0.4

+ hooks:

+ - id: flake8

+ args: ["--exclude=xtuner/model/transformers_models/*"]

+ - repo: https://github.com/PyCQA/isort

+ rev: 5.11.5

+ hooks:

+ - id: isort

+ - repo: https://github.com/pre-commit/mirrors-yapf

+ rev: v0.32.0

+ hooks:

+ - id: yapf

+ exclude: 'xtuner/parallel/sequence/__init__.py'

+ - repo: https://github.com/pre-commit/pre-commit-hooks

+ rev: v4.3.0

+ hooks:

+ - id: trailing-whitespace

+ - id: check-yaml

+ - id: end-of-file-fixer

+ - id: requirements-txt-fixer

+ - id: double-quote-string-fixer

+ - id: check-merge-conflict

+ - id: fix-encoding-pragma

+ args: ["--remove"]

+ - id: mixed-line-ending

+ args: ["--fix=lf"]

+ - repo: https://github.com/codespell-project/codespell

+ rev: v2.2.1

+ hooks:

+ - id: codespell

+ - repo: https://github.com/executablebooks/mdformat

+ rev: 0.7.9

+ hooks:

+ - id: mdformat

+ args: ["--number"]

+ additional_dependencies:

+ - mdformat-openmmlab

+ - mdformat_frontmatter

+ - linkify-it-py

+ exclude: 'docs/zh_cn/user_guides/sequence_parallel.md'

+ - repo: https://github.com/myint/docformatter

+ rev: v1.3.1

+ hooks:

+ - id: docformatter

+ args: ["--in-place", "--wrap-descriptions", "79"]

+ - repo: https://github.com/asottile/pyupgrade

+ rev: v3.0.0

+ hooks:

+ - id: pyupgrade

+ args: ["--py36-plus"]

diff --git a/data/xtuner/LICENSE b/data/xtuner/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..261eeb9e9f8b2b4b0d119366dda99c6fd7d35c64

--- /dev/null

+++ b/data/xtuner/LICENSE

@@ -0,0 +1,201 @@

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright [yyyy] [name of copyright owner]

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/data/xtuner/MANIFEST.in b/data/xtuner/MANIFEST.in

new file mode 100644

index 0000000000000000000000000000000000000000..36e1610bf8093a8355a58d7d9779697a64931313

--- /dev/null

+++ b/data/xtuner/MANIFEST.in

@@ -0,0 +1,2 @@

+recursive-include xtuner/configs *.py *.yml *.json

+recursive-include xtuner/tools *.sh *.py

diff --git a/data/xtuner/README.md b/data/xtuner/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..263d300c7a17778e3be4ff6f64cd262995f98527

--- /dev/null

+++ b/data/xtuner/README.md

@@ -0,0 +1,302 @@

+

+

+

+

+[](https://github.com/InternLM/xtuner/stargazers)

+[](https://github.com/InternLM/xtuner/blob/main/LICENSE)

+[](https://pypi.org/project/xtuner/)

+[](https://pypi.org/project/xtuner/)

+[](https://github.com/InternLM/xtuner/issues)

+[](https://github.com/InternLM/xtuner/issues)

+

+👋 join us on [](https://cdn.vansin.top/internlm/xtuner.jpg)

+[](https://twitter.com/intern_lm)

+[](https://discord.gg/xa29JuW87d)

+

+🔍 Explore our models on

+[](https://huggingface.co/xtuner)

+[](https://www.modelscope.cn/organization/xtuner)

+[](https://openxlab.org.cn/usercenter/xtuner)

+[](https://www.wisemodel.cn/organization/xtuner)

+

+English | [简体中文](README_zh-CN.md)

+

+

+

+

+

+

+

+

+|

+ Models

+ |

+

+ SFT Datasets

+ |

+

+ Data Pipelines

+ |

+

+ Algorithms

+ |

+

+

+|

+

+ |

+

+

+ |

+

+

+ |

+

+

+ |

+

+

+

+

+## 🛠️ Quick Start

+

+### Installation

+

+- It is recommended to build a Python-3.10 virtual environment using conda

+

+ ```bash

+ conda create --name xtuner-env python=3.10 -y

+ conda activate xtuner-env

+ ```

+

+- Install XTuner via pip

+

+ ```shell

+ pip install -U xtuner

+ ```

+

+ or with DeepSpeed integration

+

+ ```shell

+ pip install -U 'xtuner[deepspeed]'

+ ```

+

+- Install XTuner from source

+

+ ```shell

+ git clone https://github.com/InternLM/xtuner.git

+ cd xtuner

+ pip install -e '.[all]'

+ ```

+

+### Fine-tune

+

+XTuner supports the efficient fine-tune (*e.g.*, QLoRA) for LLMs. Dataset prepare guides can be found on [dataset_prepare.md](./docs/en/user_guides/dataset_prepare.md).

+

+- **Step 0**, prepare the config. XTuner provides many ready-to-use configs and we can view all configs by

+

+ ```shell

+ xtuner list-cfg

+ ```

+

+ Or, if the provided configs cannot meet the requirements, please copy the provided config to the specified directory and make specific modifications by

+