bubbliiiing committed · Commit 145f4aa · 1 Parent(s): b95884d

Update Weights

- .gitattributes +13 -0

- LICENSE.txt +201 -0

- README.md +249 -3

- README_en.md +249 -0

- Wan2.1_VAE.pth +3 -0

- config.json +29 -0

- configuration.json +1 -0

- models_clip_open-clip-xlm-roberta-large-vit-huge-14.pth +3 -0

- models_t5_umt5-xxl-enc-bf16.pth +3 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,16 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

google/umt5-xxl/tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

xlm-roberta-large/tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

assets/comp_effic.png filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

assets/data_for_diff_stage.jpg filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

assets/i2v_res.png filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

assets/logo.png filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

assets/t2v_res.jpg filter=lfs diff=lfs merge=lfs -text

|

| 43 |

+

assets/vben_vs_sota.png filter=lfs diff=lfs merge=lfs -text

|

| 44 |

+

assets/vben_vs_sota_t2i.jpg filter=lfs diff=lfs merge=lfs -text

|

| 45 |

+

assets/video_dit_arch.jpg filter=lfs diff=lfs merge=lfs -text

|

| 46 |

+

assets/video_vae_res.jpg filter=lfs diff=lfs merge=lfs -text

|

| 47 |

+

examples/i2v_input.JPG filter=lfs diff=lfs merge=lfs -text

|

| 48 |

+

assets/vben_1.3b_vs_sota.png filter=lfs diff=lfs merge=lfs -text

|
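The lines added to `.gitattributes` above route specific files through Git LFS. The following is a minimal sketch of how such patterns select paths. It uses Python's `fnmatchcase` as an approximation of Git's own matching (for example, `*` here also matches `/`, which Git does not do). The helper name `is_lfs_tracked` and the chosen subset of patterns are illustrative assumptions, not part of the commit.

```python
from fnmatch import fnmatchcase

# A subset of the patterns shown in the .gitattributes hunk above.
LFS_PATTERNS = [
    "*.zip",
    "*.zst",
    "*tfevents*",
    "google/umt5-xxl/tokenizer.json",
    "xlm-roberta-large/tokenizer.json",
    "assets/logo.png",
]


def is_lfs_tracked(path: str) -> bool:
    """Approximate check of whether a repo-relative path matches an LFS pattern.

    Patterns containing a slash are compared against the whole path;
    slash-free patterns are compared against the file name only.
    This is a simplification of Git's real rules.
    """
    basename = path.rsplit("/", 1)[-1]
    for pattern in LFS_PATTERNS:
        candidate = path if "/" in pattern else basename
        if fnmatchcase(candidate, pattern):
            return True
    return False


for example in ["assets/logo.png", "backup/run1.zip", "README.md"]:
    print(example, is_lfs_tracked(example))
```

For an authoritative answer on a real checkout, `git check-attr filter -- <path>` reports the attribute Git itself resolves.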

LICENSE.txt

ADDED

|

@@ -0,0 +1,201 @@

|

| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 167 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 168 |

+

or other liability obligations and/or rights consistent with this

|

| 169 |

+

License. However, in accepting such obligations, You may act only

|

| 170 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 171 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 172 |

+

defend, and hold each Contributor harmless for any liability

|

| 173 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 174 |

+

of your accepting any such warranty or additional liability.

|

| 175 |

+

|

| 176 |

+

END OF TERMS AND CONDITIONS

|

| 177 |

+

|

| 178 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 179 |

+

|

| 180 |

+

To apply the Apache License to your work, attach the following

|

| 181 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 182 |

+

replaced with your own identifying information. (Don't include

|

| 183 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 184 |

+

comment syntax for the file format. We also recommend that a

|

| 185 |

+

file or class name and description of purpose be included on the

|

| 186 |

+

same "printed page" as the copyright notice for easier

|

| 187 |

+

identification within third-party archives.

|

| 188 |

+

|

| 189 |

+

Copyright [yyyy] [name of copyright owner]

|

| 190 |

+

|

| 191 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 192 |

+

you may not use this file except in compliance with the License.

|

| 193 |

+

You may obtain a copy of the License at

|

| 194 |

+

|

| 195 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 196 |

+

|

| 197 |

+

Unless required by applicable law or agreed to in writing, software

|

| 198 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 199 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 200 |

+

See the License for the specific language governing permissions and

|

| 201 |

+

limitations under the License.

|

README.md

CHANGED

|

@@ -1,3 +1,249 @@

|

|

| 1 |

-

---

|

| 2 |

-

license: apache-2.0

|

| 3 |

-

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

- zh

|

| 6 |

+

pipeline_tag: text-to-video

|

| 7 |

+

library_name: diffusers

|

| 8 |

+

tags:

|

| 9 |

+

- video

|

| 10 |

+

- video-generation

|

| 11 |

+

---

|

| 12 |

+

|

| 13 |

+

# Wan-Fun

|

| 14 |

+

|

| 15 |

+

😊 Welcome!

|

| 16 |

+

|

| 17 |

+

[](https://huggingface.co/spaces/alibaba-pai/Wan2.1-Fun-1.3B-InP)

|

| 18 |

+

|

| 19 |

+

[](https://github.com/aigc-apps/VideoX-Fun)

|

| 20 |

+

|

| 21 |

+

[English](./README_en.md) | [简体中文](./README.md)

|

| 22 |

+

|

| 23 |

+

# Table of Contents

|

| 24 |

+

- [Table of Contents](#table-of-contents)

|

| 25 |

+

- [Model zoo](#model-zoo)

|

| 26 |

+

- [Video Result](#video-result)

|

| 27 |

+

- [Quick Start](#quick-start)

|

| 28 |

+

- [How to use](#how-to-use)

|

| 29 |

+

- [Reference](#reference)

|

| 30 |

+

- [License](#license)

|

| 31 |

+

|

| 32 |

+

# Model zoo

|

| 33 |

+

V1.0:

|

| 34 |

+

| Name | Storage Space | Hugging Face | Model Scope | Description |

|

| 35 |

+

|--|--|--|--|--|

|

| 36 |

+

| Wan2.1-Fun-1.3B-InP | 19.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.1-Fun-1.3B-InP) | [😄Link](https://modelscope.cn/models/PAI/Wan2.1-Fun-1.3B-InP) | Wan2.1-Fun-1.3B text-and-image-to-video weights, trained at multiple resolutions, supporting start and end frame prediction. |

|

| 37 |

+

| Wan2.1-Fun-14B-InP | 47.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.1-Fun-14B-InP) | [😄Link](https://modelscope.cn/models/PAI/Wan2.1-Fun-14B-InP) | Wan2.1-Fun-14B text-and-image-to-video weights, trained at multiple resolutions, supporting start and end frame prediction. |

|

| 38 |

+

| Wan2.1-Fun-1.3B-Control | 19.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.1-Fun-1.3B-Control) | [😄Link](https://modelscope.cn/models/PAI/Wan2.1-Fun-1.3B-Control)| Wan2.1-Fun-1.3B video control weights, supporting control conditions such as Canny, Depth, Pose, and MLSD, as well as trajectory control. Supports video prediction at multiple resolutions (512, 768, 1024), is trained with 81 frames at 16 frames per second, and supports multilingual prediction. |

|

| 39 |

+

| Wan2.1-Fun-14B-Control | 47.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.1-Fun-14B-Control) | [😄Link](https://modelscope.cn/models/PAI/Wan2.1-Fun-14B-Control)| Wan2.1-Fun-14B video control weights, supporting control conditions such as Canny, Depth, Pose, and MLSD, as well as trajectory control. Supports video prediction at multiple resolutions (512, 768, 1024), is trained with 81 frames at 16 frames per second, and supports multilingual prediction. |

|

| 40 |

+

|
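The Control rows above state that training used 81 frames at 16 frames per second. As a small worked example, the clip length this implies can be computed directly. The function name `clip_duration_seconds` is only illustrative.

```python
def clip_duration_seconds(num_frames: int, fps: float) -> float:
    """Duration of a clip with `num_frames` frames played at `fps` frames per second."""
    return num_frames / fps


# Values taken from the model table above: 81 frames at 16 fps.
print(clip_duration_seconds(81, 16))  # 5.0625
```

So a clip at the training length runs for roughly five seconds.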

| 41 |

+

# Video Result

|

| 42 |

+

|

| 43 |

+

### Wan2.1-Fun-14B-InP && Wan2.1-Fun-1.3B-InP

|

| 44 |

+

|

| 45 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 46 |

+

<tr>

|

| 47 |

+

<td>

|

| 48 |

+

<video src="https://github.com/user-attachments/assets/bd72a276-e60e-4b5d-86c1-d0f67e7425b9" width="100%" controls autoplay loop></video>

|

| 49 |

+

</td>

|

| 50 |

+

<td>

|

| 51 |

+

<video src="https://github.com/user-attachments/assets/cb7aef09-52c2-4973-80b4-b2fb63425044" width="100%" controls autoplay loop></video>

|

| 52 |

+

</td>

|

| 53 |

+

<td>

|

| 54 |

+

<video src="https://github.com/user-attachments/assets/4e10d491-f1cf-4b08-a7c5-1e01e5418140" width="100%" controls autoplay loop></video>

|

| 55 |

+

</td>

|

| 56 |

+

<td>

|

| 57 |

+

<video src="https://github.com/user-attachments/assets/f7e363a9-be09-4b72-bccf-cce9c9ebeb9b" width="100%" controls autoplay loop></video>

|

| 58 |

+

</td>

|

| 59 |

+

</tr>

|

| 60 |

+

</table>

|

| 61 |

+

|

| 62 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 63 |

+

<tr>

|

| 64 |

+

<td>

|

| 65 |

+

<video src="https://github.com/user-attachments/assets/28f3e720-8acc-4f22-a5d0-ec1c571e9466" width="100%" controls autoplay loop></video>

|

| 66 |

+

</td>

|

| 67 |

+

<td>

|

| 68 |

+

<video src="https://github.com/user-attachments/assets/fb6e4cb9-270d-47cd-8501-caf8f3e91b5c" width="100%" controls autoplay loop></video>

|

| 69 |

+

</td>

|

| 70 |

+

<td>

|

| 71 |

+

<video src="https://github.com/user-attachments/assets/989a4644-e33b-4f0c-b68e-2ff6ba37ac7e" width="100%" controls autoplay loop></video>

|

| 72 |

+

</td>

|

| 73 |

+

<td>

|

| 74 |

+

<video src="https://github.com/user-attachments/assets/9c604fa7-8657-49d1-8066-b5bb198b28b6" width="100%" controls autoplay loop></video>

|

| 75 |

+

</td>

|

| 76 |

+

</tr>

|

| 77 |

+

</table>

|

| 78 |

+

|

| 79 |

+

### Wan2.1-Fun-14B-Control && Wan2.1-Fun-1.3B-Control

|

| 80 |

+

|

| 81 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 82 |

+

<tr>

|

| 83 |

+

<td>

|

| 84 |

+

<video src="https://github.com/user-attachments/assets/f35602c4-9f0a-4105-9762-1e3a88abbac6" width="100%" controls autoplay loop></video>

|

| 85 |

+

</td>

|

| 86 |

+

<td>

|

| 87 |

+

<video src="https://github.com/user-attachments/assets/8b0f0e87-f1be-4915-bb35-2d53c852333e" width="100%" controls autoplay loop></video>

|

| 88 |

+

</td>

|

| 89 |

+

<td>

|

| 90 |

+

<video src="https://github.com/user-attachments/assets/972012c1-772b-427a-bce6-ba8b39edcfad" width="100%" controls autoplay loop></video>

|

| 91 |

+

</td>

|

| 92 |

+

</tr>

|

| 93 |

+

</table>

|

| 94 |

+

|

| 95 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 96 |

+

<tr>

|

| 97 |

+

<td>

|

| 98 |

+

<video src="https://github.com/user-attachments/assets/53002ce2-dd18-4d4f-8135-b6f68364cabd" width="100%" controls autoplay loop></video>

|

| 99 |

+

</td>

|

| 100 |

+

<td>

|

| 101 |

+

<video src="https://github.com/user-attachments/assets/a1a07cf8-d86d-4cd2-831f-18a6c1ceee1d" width="100%" controls autoplay loop></video>

|

| 102 |

+

</td>

|

| 103 |

+

<td>

|

| 104 |

+

<video src="https://github.com/user-attachments/assets/3224804f-342d-4947-918d-d9fec8e3d273" width="100%" controls autoplay loop></video>

|

| 105 |

+

</td>

|

| 106 |

+

<tr>

|

| 107 |

+

<td>

|

| 108 |

+

<video src="https://github.com/user-attachments/assets/c6c5d557-9772-483e-ae47-863d8a26db4a" width="100%" controls autoplay loop></video>

|

| 109 |

+

</td>

|

| 110 |

+

<td>

|

| 111 |

+

<video src="https://github.com/user-attachments/assets/af617971-597c-4be4-beb5-f9e8aaca2d14" width="100%" controls autoplay loop></video>

|

| 112 |

+

</td>

|

| 113 |

+

<td>

|

| 114 |

+

<video src="https://github.com/user-attachments/assets/8411151e-f491-4264-8368-7fc3c5a6992b" width="100%" controls autoplay loop></video>

|

| 115 |

+

</td>

|

| 116 |

+

</tr>

|

| 117 |

+

</table>

|

| 118 |

+

|

| 119 |

+

# Quick Start

|

| 120 |

+

### 1. Cloud usage: AliyunDSW/Docker

|

| 121 |

+

#### a. From AliyunDSW

|

| 122 |

+

DSW offers free GPU time, which each user can apply for once; it is valid for 3 months after applying.

|

| 123 |

+

|

| 124 |

+

Aliyun provides free GPU time through [Freetier](https://free.aliyun.com/?product=9602825&crowd=enterprise&spm=5176.28055625.J_5831864660.1.e939154aRgha4e&scm=20140722.M_9974135.P_110.MO_1806-ID_9974135-MID_9974135-CID_30683-ST_8512-V_1); claim it and use it in Aliyun PAI-DSW to start CogVideoX-Fun within 5 minutes.

|

| 125 |

+

|

| 126 |

+

[](https://gallery.pai-ml.com/#/preview/deepLearning/cv/cogvideox_fun)

|

| 127 |

+

|

| 128 |

+

#### b. From ComfyUI

|

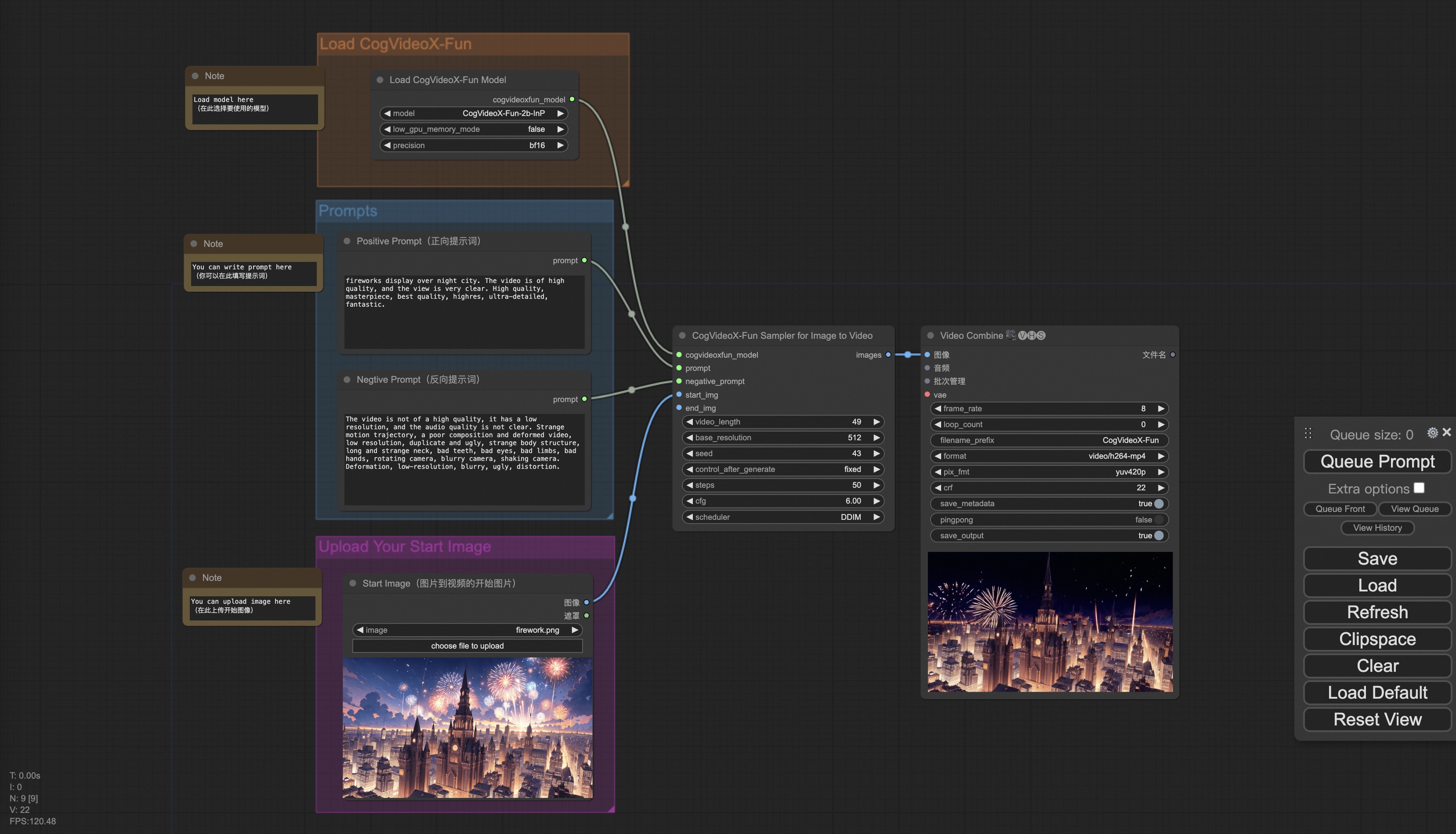

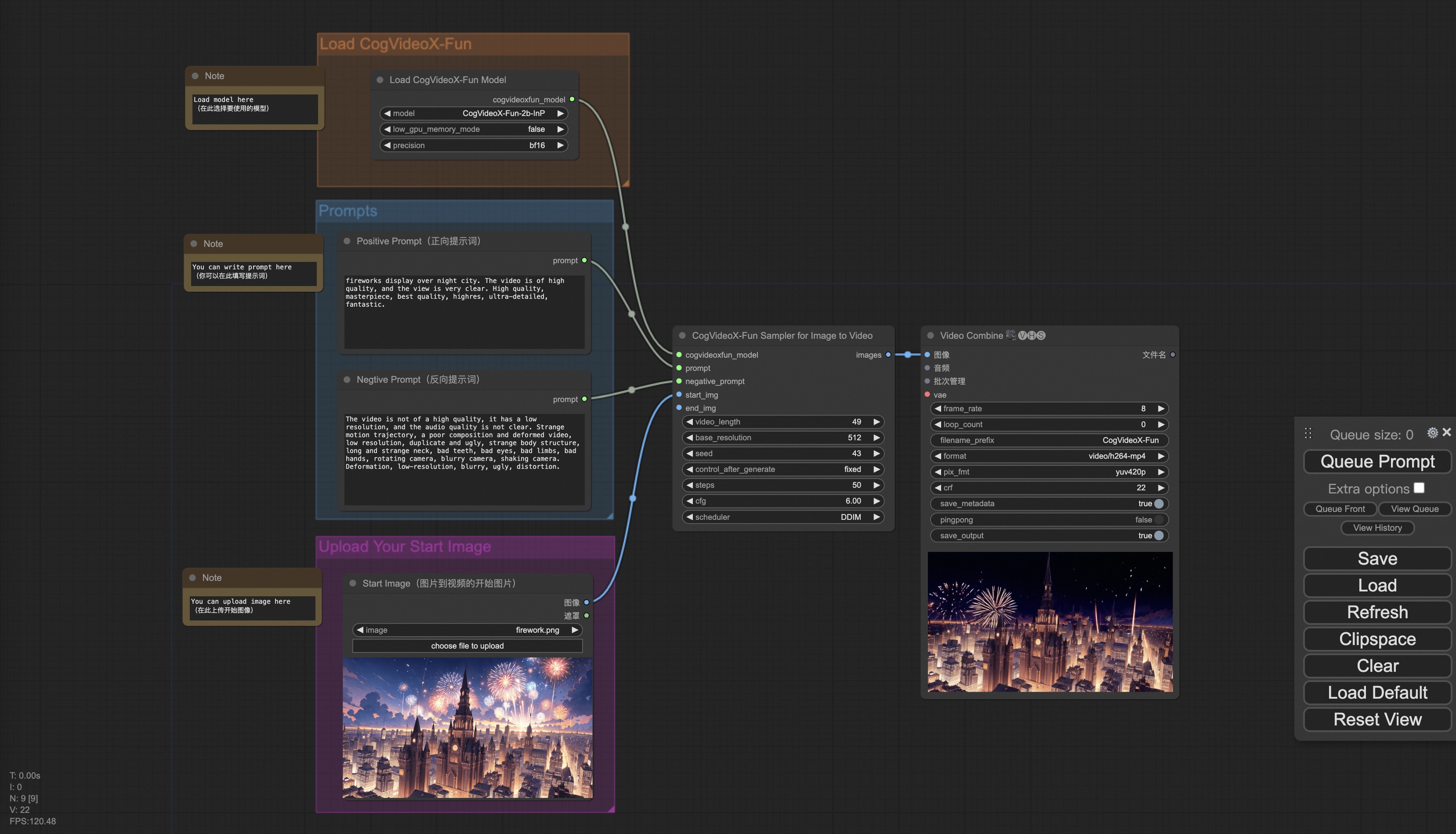

| 129 |

+

Our ComfyUI interface is as follows; see the [ComfyUI README](comfyui/README.md) for details.

|

| 130 |

+

|

| 131 |

+

|

| 132 |

+

#### c. From docker

|

| 133 |

+

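If you are using docker, make sure the GPU driver and CUDA environment are correctly installed on your machine, then run the following commands in order: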

|

| 134 |

+

|

| 135 |

+

```

|

| 136 |

+

# pull image

|

| 137 |

+

docker pull mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

|

| 138 |

+

|

| 139 |

+

# enter image

|

| 140 |

+

docker run -it -p 7860:7860 --network host --gpus all --security-opt seccomp:unconfined --shm-size 200g mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

|

| 141 |

+

|

| 142 |

+

# clone code

|

| 143 |

+

git clone https://github.com/aigc-apps/CogVideoX-Fun.git

|

| 144 |

+

|

| 145 |

+

# enter CogVideoX-Fun's dir

|

| 146 |

+

cd CogVideoX-Fun

|

| 147 |

+

|

| 148 |

+

# download weights

|

| 149 |

+

mkdir -p models/Diffusion_Transformer

|

| 150 |

+

mkdir -p models/Personalized_Model

|

| 151 |

+

|

| 152 |

+

# Please use the Hugging Face link or ModelScope link to download the model.

|

| 153 |

+

# CogVideoX-Fun

|

| 154 |

+

# https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.1-5b-InP

|

| 155 |

+

# https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.1-5b-InP

|

| 156 |

+

|

| 157 |

+

# Wan

|

| 158 |

+

# https://huggingface.co/alibaba-pai/Wan2.1-Fun-14B-InP

|

| 159 |

+

# https://modelscope.cn/models/PAI/Wan2.1-Fun-14B-InP

|

| 160 |

+

```

|

| 161 |

+

|
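The docker block above ends by pointing at the Hugging Face and ModelScope pages rather than giving a download command. One possible way to fetch the weights into the folder created there is sketched below, assuming the `huggingface_hub` package is installed. The helper names `target_dir` and `download` are illustrative and not part of the repository.

```python
from pathlib import Path

# Folder created by the `mkdir` step in the block above.
MODELS_ROOT = Path("models") / "Diffusion_Transformer"


def target_dir(repo_id: str, root: Path = MODELS_ROOT) -> Path:
    """Map a repo id such as 'alibaba-pai/Wan2.1-Fun-14B-InP' to its local folder."""
    return root / repo_id.split("/")[-1]


def download(repo_id: str) -> Path:
    """Download a full model repository into models/Diffusion_Transformer/<name>."""
    # Imported lazily so that `target_dir` works without the package installed.
    from huggingface_hub import snapshot_download

    dest = target_dir(repo_id)
    dest.mkdir(parents=True, exist_ok=True)
    snapshot_download(repo_id=repo_id, local_dir=str(dest))
    return dest


print(target_dir("alibaba-pai/Wan2.1-Fun-14B-InP").as_posix())
```

Calling `download("alibaba-pai/Wan2.1-Fun-14B-InP")` would then start the transfer; per the model table this repository is about 47 GB, so check free disk space first.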

| 162 |

+

### 2. Local install: Environment Check/Downloading/Installation

|

| 163 |

+

#### a. Environment Check

|

| 164 |

+

We have verified that this library runs in the following environments:

|

| 165 |

+

|

| 166 |

+

Details for Windows:

|

| 167 |

+

- OS: Windows 10

|

| 168 |

+

- python: python3.10 & python3.11

|

| 169 |

+

- pytorch: torch2.2.0

|

| 170 |

+

- CUDA: 11.8 & 12.1

|

| 171 |

+

- CUDNN: 8+

|

| 172 |

+

- GPU: Nvidia-3060 12G & Nvidia-3090 24G

|

| 173 |

+

|

| 174 |

+

Details for Linux:

|

| 175 |

+

- OS: Ubuntu 20.04, CentOS

|

| 176 |

+

- python: python3.10 & python3.11

|

| 177 |

+

- pytorch: torch2.2.0

|

| 178 |

+

- CUDA: 11.8 & 12.1

|

| 179 |

+

- CUDNN: 8+

|

| 180 |

+

- GPU: Nvidia-V100 16G & Nvidia-A10 24G & Nvidia-A100 40G & Nvidia-A100 80G

|

| 181 |

+

|

| 182 |

+

About 60 GB of free disk space is required; please check!

|

| 183 |

+

|
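The environment list above names Python 3.10 and 3.11 and asks for about 60 GB of free disk space. A minimal pre-flight check along those lines could look like the sketch below. It covers only those two items and uses only the standard library; GPU, CUDA, and PyTorch checks are left out, and the function names are illustrative.

```python
import shutil
import sys

# Values taken from the environment list above.
SUPPORTED_PYTHON = {(3, 10), (3, 11)}
REQUIRED_FREE_GB = 60


def python_supported(version=sys.version_info) -> bool:
    """True if the (major, minor) version is one of the verified versions."""
    return (version[0], version[1]) in SUPPORTED_PYTHON


def enough_disk(path: str = ".", required_gb: float = REQUIRED_FREE_GB) -> bool:
    """True if the filesystem holding `path` has at least `required_gb` GiB free."""
    free_gb = shutil.disk_usage(path).free / 1024**3
    return free_gb >= required_gb


print("python ok:", python_supported())
print("disk ok:", enough_disk())
```

A failing check does not necessarily mean the library cannot run; the list only records the configurations that were verified.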

| 184 |

+

#### b. Weights

|

| 185 |

+

It is best to place the [weights](#model-zoo) along the specified paths:

|

| 186 |

+

|

| 187 |

+

```

|

| 188 |

+

📦 models/

|

| 189 |

+

├── 📂 Diffusion_Transformer/

|

| 190 |

+

│ ├── 📂 CogVideoX-Fun-V1.1-2b-InP/

|

| 191 |

+

│ ├── 📂 CogVideoX-Fun-V1.1-5b-InP/

|

| 192 |

+

│ ├── 📂 Wan2.1-Fun-14B-InP/

|

| 193 |

+

│ └── 📂 Wan2.1-Fun-1.3B-InP/

|

| 194 |

+

├── 📂 Personalized_Model/

|

| 195 |

+

│ └── your trained transformer model / your trained lora model (for UI load)

|

| 196 |

+

```

|

| 197 |

+

|
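The directory tree above can be created and checked programmatically. The sketch below builds the two top-level folders and reports which model folders are still missing. The function names are illustrative assumptions; only the folder names come from the tree.

```python
from pathlib import Path

# Top-level folders from the tree above.
EXPECTED_DIRS = ["Diffusion_Transformer", "Personalized_Model"]


def ensure_layout(root: Path) -> list[Path]:
    """Create the expected folders under `root` (no error if they already exist)."""
    created = []
    for name in EXPECTED_DIRS:
        folder = root / name
        folder.mkdir(parents=True, exist_ok=True)
        created.append(folder)
    return created


def missing_weights(root: Path, model_names: list[str]) -> list[str]:
    """Return the model folder names not yet present under Diffusion_Transformer."""
    base = root / "Diffusion_Transformer"
    return [name for name in model_names if not (base / name).is_dir()]
```

For example, after `ensure_layout(Path("models"))`, calling `missing_weights(Path("models"), ["Wan2.1-Fun-14B-InP", "Wan2.1-Fun-1.3B-InP"])` lists whichever of the two has not been downloaded yet.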

| 198 |

+

# How to use

|

| 199 |

+

|

| 200 |

+

<h3 id="video-gen">1. Generation </h3>

|

| 201 |

+

|

| 202 |

+

#### a. GPU memory saving

|

| 203 |

+

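Because Wan2.1 has a very large number of parameters, GPU memory saving schemes are needed to fit consumer-grade GPUs. Every prediction file provides a GPU_memory_mode option, which can be set to model_cpu_offload, model_cpu_offload_and_qfloat8, or sequential_cpu_offload. The same scheme also applies to CogVideoX-Fun generation.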

|

| 204 |

+

|

| 205 |

+

- model_cpu_offload moves the entire model to the CPU after use, which saves some GPU memory.

|

| 206 |

+

- model_cpu_offload_and_qfloat8 moves the entire model to the CPU after use and also quantizes the transformer model to float8, which saves more GPU memory.

|

| 207 |

+

- sequential_cpu_offload moves each layer of the model to the CPU after use; it is slower but saves a large amount of GPU memory.

|

| 208 |

+

|

| 209 |

+

qfloat8 partially degrades model performance but saves more GPU memory. If GPU memory is sufficient, model_cpu_offload is recommended.

|

| 210 |

+

|
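The three GPU_memory_mode values described above trade speed for memory. The sketch below shows one way to pick a mode from the amount of free GPU memory. The thresholds (24 GB and 12 GB) are purely hypothetical placeholders, not figures from the README, and `pick_gpu_memory_mode` is an illustrative helper rather than a function in the library.

```python
# Mode names as listed in the README; the descriptions paraphrase it.
GPU_MEMORY_MODES = {
    "model_cpu_offload": "whole model moves to CPU after use; saves some memory",
    "model_cpu_offload_and_qfloat8": "as above, plus float8 quantization of the transformer",
    "sequential_cpu_offload": "each layer moves to CPU after use; slowest, saves the most",
}


def pick_gpu_memory_mode(
    free_vram_gb: float,
    comfortable_gb: float = 24.0,  # hypothetical threshold, tune for your model
    tight_gb: float = 12.0,        # hypothetical threshold, tune for your model
) -> str:
    """Choose the least aggressive mode that the available memory allows."""
    if free_vram_gb >= comfortable_gb:
        return "model_cpu_offload"
    if free_vram_gb >= tight_gb:
        return "model_cpu_offload_and_qfloat8"
    return "sequential_cpu_offload"


for vram in (40, 16, 8):
    mode = pick_gpu_memory_mode(vram)
    print(f"{vram} GB -> {mode}: {GPU_MEMORY_MODES[mode]}")
```

The returned string is what would be assigned to GPU_memory_mode in a prediction file. The ordering follows the README's recommendation to prefer model_cpu_offload when memory is sufficient.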

| 211 |

+

#### b. Using ComfyUI

|

| 212 |

+

See the [ComfyUI README](comfyui/README.md) for details.

|

| 213 |

+

|

| 214 |

+

#### c. Running Python files

|

| 215 |

+

- Step 1: Download the corresponding [weights](#model-zoo) and place them in the models folder.

|

| 216 |

+

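- Step 2: Use the prediction file that matches the weights and the prediction target. The library currently supports CogVideoX-Fun, Wan2.1, and Wan2.1-Fun, which are separated by folder name under the examples folder. Different models support different features, so check the one you are using. The steps below use CogVideoX-Fun as an example.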

|

| 217 |

+

- Text-to-video:

|

| 218 |

+

- Modify prompt, neg_prompt, guidance_scale, and seed in examples/cogvideox_fun/predict_t2v.py.

|

| 219 |

+

- Then run examples/cogvideox_fun/predict_t2v.py and wait for the result, which is saved in the samples/cogvideox-fun-videos folder.

|

| 220 |

+

- Image-to-video:

|

| 221 |

+

- Modify validation_image_start, validation_image_end, prompt, neg_prompt, guidance_scale, and seed in examples/cogvideox_fun/predict_i2v.py.

|

| 222 |

+

- validation_image_start is the first image of the video, and validation_image_end is the last image of the video.

|

| 223 |

+

- Then run examples/cogvideox_fun/predict_i2v.py and wait for the result, which is saved in the samples/cogvideox-fun-videos_i2v folder.

|

| 224 |

+

- Video-to-video:

|

| 225 |

+

- Modify validation_video, validation_image_end, prompt, neg_prompt, guidance_scale, and seed in examples/cogvideox_fun/predict_v2v.py.

|

| 226 |

+

- validation_video is the reference video for video-to-video generation. You can run the demo with the following video: [demo video](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1/play_guitar.mp4)

|

| 227 |

+

- Then run examples/cogvideox_fun/predict_v2v.py and wait for the result, which is saved in the samples/cogvideox-fun-videos_v2v folder.

|

| 228 |

+

- Control-to-video (Canny, Pose, Depth, etc.):

|

| 229 |

+

- Modify control_video, validation_image_end, prompt, neg_prompt, guidance_scale, and seed in examples/cogvideox_fun/predict_v2v_control.py.

|

| 230 |

+

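- control_video is the control video for control-to-video generation, extracted with operators such as Canny, Pose, or Depth. You can run the demo with the following video: [demo video](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1.1/pose.mp4)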

|

| 231 |

+

- Then run examples/cogvideox_fun/predict_v2v_control.py and wait for the result, which is saved in the samples/cogvideox-fun-videos_v2v_control folder.

|

| 232 |

+

- Step 3: If you want to combine other backbones or LoRAs that you trained yourself, modify the relevant paths and lora_path in examples/{model_name}/predict_t2v.py or examples/{model_name}/predict_i2v.py as needed.

|

| 233 |

+

|
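The steps above pair each generation task with a prediction script and an output folder. The sketch below collects those pairs in one table and launches the matching script. The script paths and output folders are the ones named in the steps; the task keys, the `Task` class, and the helper names are illustrative assumptions. The sketch also assumes it is run from the repository root after the script's parameters have been edited by hand.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    script: str      # prediction script to run
    output_dir: str  # folder where the README says results are saved


# Script and output folder pairs as listed in the steps above (CogVideoX-Fun example).
TASKS = {
    "t2v": Task("examples/cogvideox_fun/predict_t2v.py", "samples/cogvideox-fun-videos"),
    "i2v": Task("examples/cogvideox_fun/predict_i2v.py", "samples/cogvideox-fun-videos_i2v"),
    "v2v": Task("examples/cogvideox_fun/predict_v2v.py", "samples/cogvideox-fun-videos_v2v"),
    "v2v_control": Task(
        "examples/cogvideox_fun/predict_v2v_control.py",
        "samples/cogvideox-fun-videos_v2v_control",
    ),
}


def build_command(task: str) -> list[str]:
    """Command line that runs the task's script with the current interpreter."""
    return [sys.executable, TASKS[task].script]


def run(task: str) -> str:
    """Run the prediction script and return the folder where results should appear."""
    subprocess.run(build_command(task), check=True)
    return TASKS[task].output_dir
```

Calling `run("i2v")`, for instance, executes examples/cogvideox_fun/predict_i2v.py and returns samples/cogvideox-fun-videos_i2v.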

| 234 |

+

#### d. Using the web UI

|

| 235 |

+

|

| 236 |

+

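The web UI supports text-to-video, image-to-video, video-to-video, and control-to-video (Canny, Pose, Depth, etc.). The library currently supports CogVideoX-Fun, Wan2.1, and Wan2.1-Fun, which are separated by folder name under the examples folder. Different models support different features, so check the one you are using. The steps below use CogVideoX-Fun as an example.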

|

| 237 |

+

|

| 238 |

+

- Step 1: Download the corresponding [weights](#model-zoo) and place them in the models folder.

|

| 239 |

+

- Step 2: Run examples/cogvideox_fun/app.py to open the Gradio page.

|

| 240 |

+

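- Step 3: Select the generation model on the page, fill in prompt, neg_prompt, guidance_scale, seed, and so on, click Generate, and wait for the result, which is saved in the sample folder.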

|

| 241 |

+

|

| 242 |

+

# Reference

|

| 243 |

+

- CogVideo: https://github.com/THUDM/CogVideo/

|

| 244 |

+

- EasyAnimate: https://github.com/aigc-apps/EasyAnimate

|

| 245 |

+

- Wan2.1: https://github.com/Wan-Video/Wan2.1/

|

| 246 |

+

|

| 247 |

+

# License

|

| 248 |

+

This project is licensed under the [Apache License (Version 2.0)](https://github.com/modelscope/modelscope/blob/master/LICENSE).

|

| 249 |

+

|

README_en.md

ADDED

|

@@ -0,0 +1,249 @@

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

- zh

|

| 6 |

+

pipeline_tag: text-to-video

|

| 7 |

+

library_name: diffusers

|

| 8 |

+

tags:

|

| 9 |

+

- video

|

| 10 |

+

- video-generation

|

| 11 |

+

---

|

| 12 |

+

|

| 13 |

+

# Wan-Fun

|

| 14 |

+

|

| 15 |

+

😊 Welcome!

|

| 16 |

+

|

| 17 |

+

[](https://huggingface.co/spaces/alibaba-pai/Wan2.1-Fun-1.3B-InP)

|

| 18 |

+

|

| 19 |

+

[](https://github.com/aigc-apps/VideoX-Fun)

|

| 20 |

+

|

| 21 |

+

[English](./README_en.md) | [简体中文](./README.md)

|

| 22 |

+

|

| 23 |

+

# Table of Contents

|

| 24 |

+

- [Table of Contents](#table-of-contents)

|

| 25 |

+

- [Model zoo](#model-zoo)

|

| 26 |

+

- [Video Result](#video-result)

|

| 27 |

+

- [Quick Start](#quick-start)

|

| 28 |

+

- [How to use](#how-to-use)

|

| 29 |

+

- [Reference](#reference)

|

| 30 |

+

- [License](#license)

|

| 31 |

+

|

| 32 |

+

# Model zoo

|

| 33 |

+

V1.0:

|

| 34 |

+

| Name | Storage Space | Hugging Face | Model Scope | Description |

|

| 35 |

+

|--|--|--|--|--|

|

| 36 |

+

| Wan2.1-Fun-1.3B-InP | 19.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.1-Fun-1.3B-InP) | [😄Link](https://modelscope.cn/models/PAI/Wan2.1-Fun-1.3B-InP) | Wan2.1-Fun-1.3B text-to-video weights, trained at multiple resolutions, supporting start and end frame prediction. |

|

| 37 |

+

| Wan2.1-Fun-14B-InP | 47.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.1-Fun-14B-InP) | [😄Link](https://modelscope.cn/models/PAI/Wan2.1-Fun-14B-InP) | Wan2.1-Fun-14B text-to-video weights, trained at multiple resolutions, supporting start and end frame prediction. |

|

| 38 |

+

| Wan2.1-Fun-1.3B-Control | 19.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.1-Fun-1.3B-Control) | [😄Link](https://modelscope.cn/models/PAI/Wan2.1-Fun-1.3B-Control) | Wan2.1-Fun-1.3B video control weights, supporting various control conditions such as Canny, Depth, Pose, MLSD, etc., and trajectory control. Supports multi-resolution (512, 768, 1024) video prediction at 81 frames, trained at 16 frames per second, with multilingual prediction support. |

|

| 39 |

+

| Wan2.1-Fun-14B-Control | 47.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.1-Fun-14B-Control) | [😄Link](https://modelscope.cn/models/PAI/Wan2.1-Fun-14B-Control) | Wan2.1-Fun-14B video control weights, supporting various control conditions such as Canny, Depth, Pose, MLSD, etc., and trajectory control. Supports multi-resolution (512, 768, 1024) video prediction at 81 frames, trained at 16 frames per second, with multilingual prediction support. |
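
As a quick check on the figures in the table, a clip of 81 frames at 16 frames per second lasts a little over five seconds. The sketch below only does that arithmetic; the helper name `clip_duration_seconds` is illustrative and not part of the library.

```python
def clip_duration_seconds(num_frames: int, fps: float) -> float:
    """Return the playback length of a clip in seconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return num_frames / fps


# Figures from the table: 81 frames, 16 frames per second.
print(f"{clip_duration_seconds(81, 16):.2f} s")  # 5.06 s
```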

|

| 40 |

+

|

| 41 |

+

# Video Result

|

| 42 |

+

|

| 43 |

+

### Wan2.1-Fun-14B-InP && Wan2.1-Fun-1.3B-InP

|

| 44 |

+

|

| 45 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 46 |

+

<tr>

|

| 47 |

+

<td>

|

| 48 |

+

<video src="https://github.com/user-attachments/assets/bd72a276-e60e-4b5d-86c1-d0f67e7425b9" width="100%" controls autoplay loop></video>

|

| 49 |

+

</td>

|

| 50 |

+

<td>

|

| 51 |

+

<video src="https://github.com/user-attachments/assets/cb7aef09-52c2-4973-80b4-b2fb63425044" width="100%" controls autoplay loop></video>

|

| 52 |

+

</td>

|

| 53 |

+

<td>

|

| 54 |

+

<video src="https://github.com/user-attachments/assets/4e10d491-f1cf-4b08-a7c5-1e01e5418140" width="100%" controls autoplay loop></video>

|

| 55 |

+

</td>

|

| 56 |

+

<td>

|

| 57 |

+

<video src="https://github.com/user-attachments/assets/f7e363a9-be09-4b72-bccf-cce9c9ebeb9b" width="100%" controls autoplay loop></video>

|

| 58 |

+

</td>

|

| 59 |

+

</tr>

|

| 60 |

+

</table>

|

| 61 |

+

|

| 62 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 63 |

+

<tr>

|

| 64 |

+

<td>

|

| 65 |

+

<video src="https://github.com/user-attachments/assets/28f3e720-8acc-4f22-a5d0-ec1c571e9466" width="100%" controls autoplay loop></video>

|

| 66 |

+

</td>

|

| 67 |

+

<td>

|

| 68 |

+

<video src="https://github.com/user-attachments/assets/fb6e4cb9-270d-47cd-8501-caf8f3e91b5c" width="100%" controls autoplay loop></video>

|

| 69 |

+

</td>

|

| 70 |

+

<td>

|

| 71 |

+

<video src="https://github.com/user-attachments/assets/989a4644-e33b-4f0c-b68e-2ff6ba37ac7e" width="100%" controls autoplay loop></video>

|

| 72 |

+

</td>

|

| 73 |

+

<td>

|

| 74 |

+

<video src="https://github.com/user-attachments/assets/9c604fa7-8657-49d1-8066-b5bb198b28b6" width="100%" controls autoplay loop></video>

|

| 75 |

+

</td>

|

| 76 |

+

</tr>

|

| 77 |

+

</table>

|

| 78 |

+

|

| 79 |

+

### Wan2.1-Fun-14B-Control && Wan2.1-Fun-1.3B-Control

|

| 80 |

+

|

| 81 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 82 |

+

<tr>

|

| 83 |

+

<td>

|

| 84 |

+

<video src="https://github.com/user-attachments/assets/f35602c4-9f0a-4105-9762-1e3a88abbac6" width="100%" controls autoplay loop></video>

|

| 85 |

+

</td>

|

| 86 |

+

<td>

|

| 87 |

+

<video src="https://github.com/user-attachments/assets/8b0f0e87-f1be-4915-bb35-2d53c852333e" width="100%" controls autoplay loop></video>

|

| 88 |

+

</td>

|

| 89 |

+

<td>

|

| 90 |

+

<video src="https://github.com/user-attachments/assets/972012c1-772b-427a-bce6-ba8b39edcfad" width="100%" controls autoplay loop></video>

|

| 91 |

+

</td>

|

| 92 |

+

</tr>

|

| 93 |

+

</table>

|

| 94 |

+

|

| 95 |

+

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 96 |

+

<tr>

|

| 97 |

+

<td>

|

| 98 |

+

<video src="https://github.com/user-attachments/assets/53002ce2-dd18-4d4f-8135-b6f68364cabd" width="100%" controls autoplay loop></video>

|

| 99 |

+

</td>

|

| 100 |

+

<td>

|

| 101 |

+

<video src="https://github.com/user-attachments/assets/a1a07cf8-d86d-4cd2-831f-18a6c1ceee1d" width="100%" controls autoplay loop></video>

|

| 102 |

+

</td>

|

| 103 |

+

<td>

|

| 104 |

+

<video src="https://github.com/user-attachments/assets/3224804f-342d-4947-918d-d9fec8e3d273" width="100%" controls autoplay loop></video>

|

| 105 |

+

</td>

|

| 106 |

+

</tr>
<tr>

|

| 107 |

+

<td>

|

| 108 |

+

<video src="https://github.com/user-attachments/assets/c6c5d557-9772-483e-ae47-863d8a26db4a" width="100%" controls autoplay loop></video>

|

| 109 |

+

</td>

|

| 110 |

+

<td>

|

| 111 |

+

<video src="https://github.com/user-attachments/assets/af617971-597c-4be4-beb5-f9e8aaca2d14" width="100%" controls autoplay loop></video>

|

| 112 |

+

</td>

|

| 113 |

+

<td>

|

| 114 |

+

<video src="https://github.com/user-attachments/assets/8411151e-f491-4264-8368-7fc3c5a6992b" width="100%" controls autoplay loop></video>

|

| 115 |

+

</td>

|

| 116 |

+

</tr>

|

| 117 |

+

</table>

|

| 118 |

+

|

| 119 |

+

# Quick Start

|

| 120 |

+

### 1. Cloud usage: AliyunDSW/Docker

|

| 121 |

+

#### a. From AliyunDSW

|

| 122 |

+

DSW offers free GPU time. Each user can apply for it once, and it remains valid for 3 months after applying.

|

| 123 |

+

|

| 124 |

+

Aliyun provides free GPU time through [Freetier](https://free.aliyun.com/?product=9602825&crowd=enterprise&spm=5176.28055625.J_5831864660.1.e939154aRgha4e&scm=20140722.M_9974135.P_110.MO_1806-ID_9974135-MID_9974135-CID_30683-ST_8512-V_1). Claim it and use it in Aliyun PAI-DSW to start CogVideoX-Fun within 5 minutes!

|

| 125 |

+

|

| 126 |

+

[](https://gallery.pai-ml.com/#/preview/deepLearning/cv/cogvideox_fun)

|

| 127 |

+

|

| 128 |

+

#### b. From ComfyUI

|

| 129 |

+

For our ComfyUI integration, please refer to the [ComfyUI README](comfyui/README.md) for details.

|

| 130 |

+

|

| 131 |

+

|

| 132 |

+

#### c. From docker

|

| 133 |

+

If you are using Docker, please make sure that the graphics card driver and the CUDA environment are installed correctly on your machine.

|

| 134 |

+

|

| 135 |

+

Then run the following commands:

|

| 136 |

+

|

| 137 |

+

```

|

| 138 |

+

# pull image

|

| 139 |

+

docker pull mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

|

| 140 |

+

|

| 141 |

+

# start a container from the image

|

| 142 |

+

docker run -it -p 7860:7860 --network host --gpus all --security-opt seccomp:unconfined --shm-size 200g mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

|

| 143 |

+

|

| 144 |

+

# clone code

|

| 145 |

+

git clone https://github.com/aigc-apps/CogVideoX-Fun.git

|

| 146 |

+

|

| 147 |

+

# enter CogVideoX-Fun's dir

|

| 148 |

+

cd CogVideoX-Fun

|

| 149 |

+

|

| 150 |

+

# download weights

|

| 151 |

+

mkdir -p models/Diffusion_Transformer

|

| 152 |

+

mkdir -p models/Personalized_Model

|

| 153 |

+

|

| 154 |

+

# Please use the Hugging Face link or the ModelScope link to download the model.

|

| 155 |

+

# CogVideoX-Fun

|

| 156 |

+

# https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.1-5b-InP

|

| 157 |

+

# https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.1-5b-InP

|

| 158 |

+

|

| 159 |

+

# Wan

|

| 160 |

+

# https://huggingface.co/alibaba-pai/Wan2.1-Fun-14B-InP

|

| 161 |

+

# https://modelscope.cn/models/PAI/Wan2.1-Fun-14B-InP

|

| 162 |

+

```
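The download step above is left as a manual task. One way to script it from Python is sketched below, assuming the `huggingface_hub` package is installed and that weights belong under `models/Diffusion_Transformer/<model name>`, as in the folder layout this README describes. The helper names `target_dir` and `download_weights` are illustrative and not part of the repository.

```python
from pathlib import Path

MODELS_ROOT = Path("models/Diffusion_Transformer")


def target_dir(repo_id: str) -> Path:
    """Map 'alibaba-pai/Wan2.1-Fun-14B-InP' to MODELS_ROOT/'Wan2.1-Fun-14B-InP'."""
    return MODELS_ROOT / repo_id.split("/")[-1]


def download_weights(repo_id: str) -> Path:
    """Download a full model repository into the expected folder."""
    # Imported lazily so target_dir() works without the package installed.
    from huggingface_hub import snapshot_download

    dest = target_dir(repo_id)
    dest.mkdir(parents=True, exist_ok=True)
    snapshot_download(repo_id=repo_id, local_dir=str(dest))
    return dest


# Example (downloads tens of gigabytes, so it is left commented out):
# download_weights("alibaba-pai/Wan2.1-Fun-14B-InP")
```

Creating the destination with `parents=True` avoids a failure when the `models` folder does not exist yet.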

|

| 163 |

+

|

| 164 |

+

### 2. Local install: Environment Check/Downloading/Installation

|

| 165 |

+

#### a. Environment Check

|

| 166 |

+

We have verified that this repo runs in the following environments:

|

| 167 |

+

|

| 168 |

+

Details for Windows:

|

| 169 |

+

- OS: Windows 10

|

| 170 |

+

- python: python3.10 & python3.11

|

| 171 |

+

- pytorch: torch2.2.0

|

| 172 |

+

- CUDA: 11.8 & 12.1

|

| 173 |

+

- CUDNN: 8+

|

| 174 |

+

- GPU: Nvidia-3060 12G & Nvidia-3090 24G

|

| 175 |

+

|

| 176 |

+

Details for Linux:

|

| 177 |

+

- OS: Ubuntu 20.04, CentOS

|

| 178 |

+

- python: python3.10 & python3.11

|

| 179 |

+

- pytorch: torch2.2.0

|

| 180 |

+

- CUDA: 11.8 & 12.1

|

| 181 |

+

- CUDNN: 8+

|

| 182 |

+

- GPU: Nvidia-V100 16G & Nvidia-A10 24G & Nvidia-A100 40G & Nvidia-A100 80G

|

| 183 |

+

|

| 184 |

+

About 60 GB of free disk space is required (for saving weights). Please check before downloading!
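The disk-space and Python-version requirements above can be checked with the standard library alone. This is a minimal sketch, not a script shipped with the repository: the function names are illustrative, and the thresholds simply restate the figures listed in this section.

```python
import shutil
import sys

REQUIRED_GB = 60  # disk space quoted in this section
VERIFIED_PYTHONS = {(3, 10), (3, 11)}  # Python versions listed in this section


def free_disk_gb(path: str = ".") -> float:
    """Free space on the filesystem containing `path`, in GiB."""
    return shutil.disk_usage(path).free / 1024**3


def has_enough_disk(path: str = ".", required_gb: float = REQUIRED_GB) -> bool:
    return free_disk_gb(path) >= required_gb


def python_is_verified(version=sys.version_info) -> bool:
    """True if the major.minor version is one of the verified ones."""
    return (version[0], version[1]) in VERIFIED_PYTHONS


print(f"free disk: {free_disk_gb():.1f} GB, enough: {has_enough_disk()}")
print(f"python {sys.version_info[0]}.{sys.version_info[1]} verified: {python_is_verified()}")
```

A Python version outside the verified set may still work. The check only reports whether it matches the list above.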

|

| 185 |

+

|

| 186 |

+

#### b. Weights

|

| 187 |

+

We recommend placing the [weights](#model-zoo) in the following directory layout:

|

| 188 |

+

|

| 189 |

+

```

|

| 190 |

+

📦 models/

|

| 191 |

+

├── 📂 Diffusion_Transformer/

|

| 192 |

+

│ ├── 📂 CogVideoX-Fun-V1.1-2b-InP/

|

| 193 |

+

│ ├── 📂 CogVideoX-Fun-V1.1-5b-InP/

|

| 194 |

+

│ ├── 📂 Wan2.1-Fun-14B-InP/

|

| 195 |

+

│ └── 📂 Wan2.1-Fun-1.3B-InP/

|

| 196 |

+

├── 📂 Personalized_Model/

|

| 197 |

+

│ └── your trained transformer model / your trained lora model (for UI load)

|

| 198 |

+

```
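Before running anything, it can help to confirm that the folders shown above are actually in place. The sketch below reports which expected model folders are missing. The helper name `missing_model_dirs` and the list of wanted models are illustrative, so adjust them to the weights you downloaded.

```python
from pathlib import Path


def missing_model_dirs(root, names):
    """Return the model folder names not found under <root>/Diffusion_Transformer."""
    base = Path(root) / "Diffusion_Transformer"
    return [name for name in names if not (base / name).is_dir()]


wanted = ["Wan2.1-Fun-1.3B-InP", "Wan2.1-Fun-14B-InP"]
missing = missing_model_dirs("models", wanted)
print("missing:", missing if missing else "none")
```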

|

| 199 |

+

|

| 200 |

+

# How to Use

|

| 201 |

+

|

| 202 |

+

<h3 id="video-gen">1. Generation</h3>

|

| 203 |

+

|

| 204 |

+

#### a. GPU Memory Optimization

|

| 205 |

+

Since Wan2.1 has a very large number of parameters, we need to consider memory optimization strategies to adapt to consumer-grade GPUs. We provide `GPU_memory_mode` for each prediction file, allowing you to choose between `model_cpu_offload`, `model_cpu_offload_and_qfloat8`, and `sequential_cpu_offload`. This solution is also applicable to CogVideoX-Fun generation.

|

| 206 |

+

|

| 207 |

+

- `model_cpu_offload`: The entire model is moved to the CPU after use, saving some GPU memory.

|

| 208 |

+

- `model_cpu_offload_and_qfloat8`: The entire model is moved to the CPU after use, and the transformer model is quantized to float8, saving more GPU memory.

|

| 209 |

+

- `sequential_cpu_offload`: Each layer of the model is moved to the CPU after use. It is slower but saves a significant amount of GPU memory.

|

| 210 |

+

|

| 211 |

+

`qfloat8` may slightly reduce model performance but saves more GPU memory. If you have sufficient GPU memory, it is recommended to use `model_cpu_offload`.
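One way to work with these options is to start with the least aggressive mode and step to the next one only after an out-of-memory error. The sketch below encodes that order. The ordering is an assumption read from the descriptions above ("some", "more", "a significant amount"), and `next_mode` is a hypothetical helper, not something the prediction files provide.

```python
# Modes ordered from least to most GPU memory saved (assumed order).
MODES_BY_SAVINGS = [
    "model_cpu_offload",
    "model_cpu_offload_and_qfloat8",
    "sequential_cpu_offload",
]


def next_mode(current: str):
    """Return the next more memory-frugal mode, or None if none is left."""
    i = MODES_BY_SAVINGS.index(current)  # raises ValueError for unknown modes
    return MODES_BY_SAVINGS[i + 1] if i + 1 < len(MODES_BY_SAVINGS) else None


GPU_memory_mode = "model_cpu_offload"
print(next_mode(GPU_memory_mode))  # model_cpu_offload_and_qfloat8
```

The value chosen this way would then be set as `GPU_memory_mode` in the prediction file before rerunning it.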

|

| 212 |

+

|

| 213 |

+

#### b. Using ComfyUI

|

| 214 |

+

For details, refer to [ComfyUI README](comfyui/README.md).

|

| 215 |

+

|

| 216 |

+

#### c. Running Python Files

|

| 217 |

+

- **Step 1**: Download the corresponding [weights](#model-zoo) and place them in the `models` folder.

|

| 218 |

+

- **Step 2**: Use different files for prediction based on the weights and prediction goals. This library currently supports CogVideoX-Fun, Wan2.1, and Wan2.1-Fun. Different models are distinguished by folder names under the `examples` folder, and their supported features vary. Use them accordingly. Below is an example using CogVideoX-Fun:

|

| 219 |

+

- **Text-to-Video**:

|

| 220 |

+

- Modify `prompt`, `neg_prompt`, `guidance_scale`, and `seed` in the file `examples/cogvideox_fun/predict_t2v.py`.

|

| 221 |

+

- Run the file `examples/cogvideox_fun/predict_t2v.py` and wait for the results. The generated videos will be saved in the folder `samples/cogvideox-fun-videos`.

|

| 222 |

+

- **Image-to-Video**:

|

| 223 |

+

- Modify `validation_image_start`, `validation_image_end`, `prompt`, `neg_prompt`, `guidance_scale`, and `seed` in the file `examples/cogvideox_fun/predict_i2v.py`.

|

| 224 |

+

- `validation_image_start` is the starting image of the video, and `validation_image_end` is the ending image of the video.

|

| 225 |

+

- Run the file `examples/cogvideox_fun/predict_i2v.py` and wait for the results. The generated videos will be saved in the folder `samples/cogvideox-fun-videos_i2v`.

|

| 226 |

+

- **Video-to-Video**:

|

| 227 |

+

- Modify `validation_video`, `validation_image_end`, `prompt`, `neg_prompt`, `guidance_scale`, and `seed` in the file `examples/cogvideox_fun/predict_v2v.py`.

|

| 228 |

+

- `validation_video` is the reference video for video-to-video generation. You can use the following demo video: [Demo Video](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1/play_guitar.mp4).

|

| 229 |

+

- Run the file `examples/cogvideox_fun/predict_v2v.py` and wait for the results. The generated videos will be saved in the folder `samples/cogvideox-fun-videos_v2v`.

|

| 230 |

+

- **Controlled Video Generation (Canny, Pose, Depth, etc.)**:

|

| 231 |

+

- Modify `control_video`, `validation_image_end`, `prompt`, `neg_prompt`, `guidance_scale`, and `seed` in the file `examples/cogvideox_fun/predict_v2v_control.py`.

|

| 232 |

+

- `control_video` is the control video extracted using operators such as Canny, Pose, or Depth. You can use the following demo video: [Demo Video](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1.1/pose.mp4).

|

| 233 |

+

- Run the file `examples/cogvideox_fun/predict_v2v_control.py` and wait for the results. The generated videos will be saved in the folder `samples/cogvideox-fun-videos_v2v_control`.

|

| 234 |

+

- **Step 3**: If you want to integrate other backbones or Loras trained by yourself, modify `lora_path` and relevant paths in `examples/{model_name}/predict_t2v.py` or `examples/{model_name}/predict_i2v.py` as needed.
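The script and output folder for each task listed above can be collected in one place. The sketch below builds the command for a task and returns where results are expected. It assumes you launch it from the repository root after editing the variables inside the chosen script. `TASKS`, `build_command`, and `run_task` are illustrative names, not part of the library.

```python
import subprocess
import sys

# task -> (script to run, folder where results are saved), as listed above
TASKS = {
    "t2v": ("examples/cogvideox_fun/predict_t2v.py", "samples/cogvideox-fun-videos"),
    "i2v": ("examples/cogvideox_fun/predict_i2v.py", "samples/cogvideox-fun-videos_i2v"),
    "v2v": ("examples/cogvideox_fun/predict_v2v.py", "samples/cogvideox-fun-videos_v2v"),
    "control": (
        "examples/cogvideox_fun/predict_v2v_control.py",
        "samples/cogvideox-fun-videos_v2v_control",
    ),
}


def build_command(task: str) -> list:
    """Command that runs the prediction script with the current interpreter."""
    script, _ = TASKS[task]
    return [sys.executable, script]


def run_task(task: str) -> str:
    """Run the script and return the folder where results should appear."""
    subprocess.run(build_command(task), check=True)
    return TASKS[task][1]


print(build_command("t2v"))
```

Calling `run_task("t2v")` starts generation, so it is not invoked here.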

|

| 235 |

+

|

| 236 |

+

#### d. Using the Web UI

|

| 237 |

+

The web UI supports text-to-video, image-to-video, video-to-video, and controlled video generation (Canny, Pose, Depth, etc.). This library currently supports CogVideoX-Fun, Wan2.1, and Wan2.1-Fun. Different models are distinguished by folder names under the `examples` folder, and their supported features vary. Use them accordingly. Below is an example using CogVideoX-Fun:

|

| 238 |

+

|

| 239 |

+

- **Step 1**: Download the corresponding [weights](#model-zoo) and place them in the `models` folder.

|

| 240 |

+

- **Step 2**: Run the file `examples/cogvideox_fun/app.py` to access the Gradio interface.

|

| 241 |

+

- **Step 3**: Select the generation model on the page, fill in `prompt`, `neg_prompt`, `guidance_scale`, and `seed`, click "Generate," and wait for the results. The generated videos will be saved in the `sample` folder.
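After a run finishes, the newest file in the output folder is usually the one you want. The sketch below finds it. The `.mp4` extension is an assumption (the README does not state the output format), and `newest_video` is a hypothetical helper.

```python
from pathlib import Path


def newest_video(folder: str = "sample", pattern: str = "*.mp4"):
    """Return the most recently modified matching file, or None if there is none."""
    root = Path(folder)
    if not root.is_dir():
        return None
    files = [p for p in root.rglob(pattern) if p.is_file()]
    return max(files, key=lambda p: p.stat().st_mtime) if files else None


print(newest_video())
```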

|

| 242 |

+

|

| 243 |

+

# Reference

|

| 244 |

+

- CogVideo: https://github.com/THUDM/CogVideo/

|

| 245 |

+

- EasyAnimate: https://github.com/aigc-apps/EasyAnimate

|

| 246 |

+

- Wan2.1: https://github.com/Wan-Video/Wan2.1/

|

| 247 |

+

|

| 248 |

+

# License

|

| 249 |

+